The Software Product Development Lifecycle: A Complete Guide for Startups and Scaleups

Every phase, every decision point, and the partnership model that keeps quality and velocity aligned from discovery to deployment.

- The software product development lifecycle combines business strategy, engineering execution, and continuous evolution into one coherent, repeatable framework.

- Discovery is the single most important phase — validating assumptions before code is written dramatically reduces the cost of failure downstream.

- Testing must be integrated continuously across all phases; defects found in production cost up to 100 times more to fix than those caught in design.

- DevOps, CI/CD, and shift-left security turn the lifecycle into a continuous loop that delivers quality at speed without sacrificing reliability.

- An embedded boutique partner aligned to your culture and stack accelerates every phase — from discovery through deployment and ongoing evolution.

- Maintenance and iteration represent most of a product’s lifespan; allocating permanent capacity for technical debt and reliability is a strategic business decision.

Table of Contents

- What is the software product development lifecycle?

- Why does the lifecycle matter for business and engineering?

- What is the difference between SDLC and the product lifecycle?

- What are the primary stages in the lifecycle?

- How does the Discovery phase define the project’s future?

- How do you translate Requirements into actionable technical scope?

- How should Design and Architecture impact the product lifecycle?

- What defines the Development phase in modern workflows?

- Why is Testing and QA integrated throughout the process?

- Comparing testing investment: early vs. late

- How to handle Deployment and the Launch process?

- What KPIs measure success after the launch?

- Why is Maintenance and Iteration the longest phase?

- How do DevOps and CI/CD enable a fluid lifecycle?

- Why shift-left security is critical to the lifecycle?

- Mapping business needs to lifecycle support

- What are the most common pitfalls in the lifecycle?

- Frequently asked questions

Building a successful software product is rarely a straight line from idea to launch. For today’s startups and scaleups, the biggest challenge is often navigating the complex journey between a market opportunity and a product that customers actually use, pay for, and recommend. The software product development lifecycle is the framework that turns this journey from guesswork into a repeatable, measurable process — one that aligns business strategy, engineering execution, and ongoing evolution into a single coherent system.

In this guide, your team will get a clear, practical view of every phase, the decisions that matter most at each stage, and how working with a boutique tech partner like Sentice can help you move faster without sacrificing quality. Whether you are launching your first MVP or scaling an established platform, the principles here apply at every stage of the journey.

What is the software product development lifecycle?

The software product development lifecycle is a comprehensive, cyclical framework that goes far beyond writing code. It bridges market discovery, business strategy, engineering execution, and continuous evolution into one connected process. Unlike a narrow engineering workflow, this lifecycle is interdisciplinary by design — it brings product managers, designers, engineers, security specialists, and operations teams together around a shared goal: delivering measurable value to users from the first concept through retirement.

Standards such as the NIST Secure Software Development Framework (SSDF) emphasize that quality and security must be embedded from the very first phase, not bolted on at the end. Treating the lifecycle as an ecosystem — not a checklist — is what separates products that scale from products that stall. When every discipline operates within the same framework, the result is a product that is technically sound, market-fit, and operationally resilient.

A process ends; a lifecycle loops. The software product development lifecycle is deliberately cyclical — each iteration of discovery, delivery, and measurement feeds directly back into the next planning cycle, compounding learning and improving quality over time.

Why does the software product development lifecycle matter for business and engineering?

Skipping a structured lifecycle is one of the most expensive mistakes a tech organization can make. Without it, teams drift into feature bloat, budgets slip, and roadmaps disconnect from real customer needs. A well-defined lifecycle aligns stakeholder expectations — ROI, timelines, market windows — with the engineering reality of velocity, technical debt, and operational load.

It creates clear decision gates where leadership can say “go,” “pivot,” or “stop” before sunk costs grow. For CEOs and CTOs, this means predictable delivery; for engineering leads, it means fewer late-stage surprises. Ultimately, the lifecycle is what turns ambition into a defensible product strategy that finance, sales, and engineering can all stand behind. The organizations that invest in lifecycle discipline consistently outpace those that treat structure as optional — particularly as they grow and the cost of coordination rises.

Clear phases and decision gates ensure that engineering priorities stay connected to revenue, market timing, and customer outcomes throughout the product’s life.

Structured phases reduce late-stage surprises by surfacing risks early, enabling teams to deliver on commitments with stable velocity and measurable quality.

Each lifecycle iteration produces data that feeds directly back into the next planning cycle, compounding quality, performance, and customer satisfaction over time.

What is the difference between SDLC and the software product development lifecycle?

SDLC (Software Development Life Cycle) is traditionally an engineering-focused process: requirements, design, coding, testing, deployment, and maintenance. It describes how software gets built. The software product development lifecycle is broader — it wraps SDLC inside a wider business framework that includes market research, customer discovery, positioning, go-to-market planning, post-launch growth, and eventual retirement.

A product can have a flawless SDLC and still fail if it doesn’t fit the market. Modern teams need both: engineering precision and comprehensive product thinking. This is exactly where partners who deliver end-to-end software solutions add real leverage — combining product strategy, design, and full-stack engineering in one aligned team rather than fragmented vendors pulling in different directions.

- Requirements gathering and analysis

- System design and architecture

- Coding and unit testing

- Integration and system testing

- Deployment and release management

- Maintenance and bug fixing

- Market research and customer discovery

- Product positioning and strategy

- Go-to-market planning and execution

- Post-launch growth and retention

- Business KPI tracking and iteration

- Product retirement and migration planning

What are the primary stages in the software product development lifecycle?

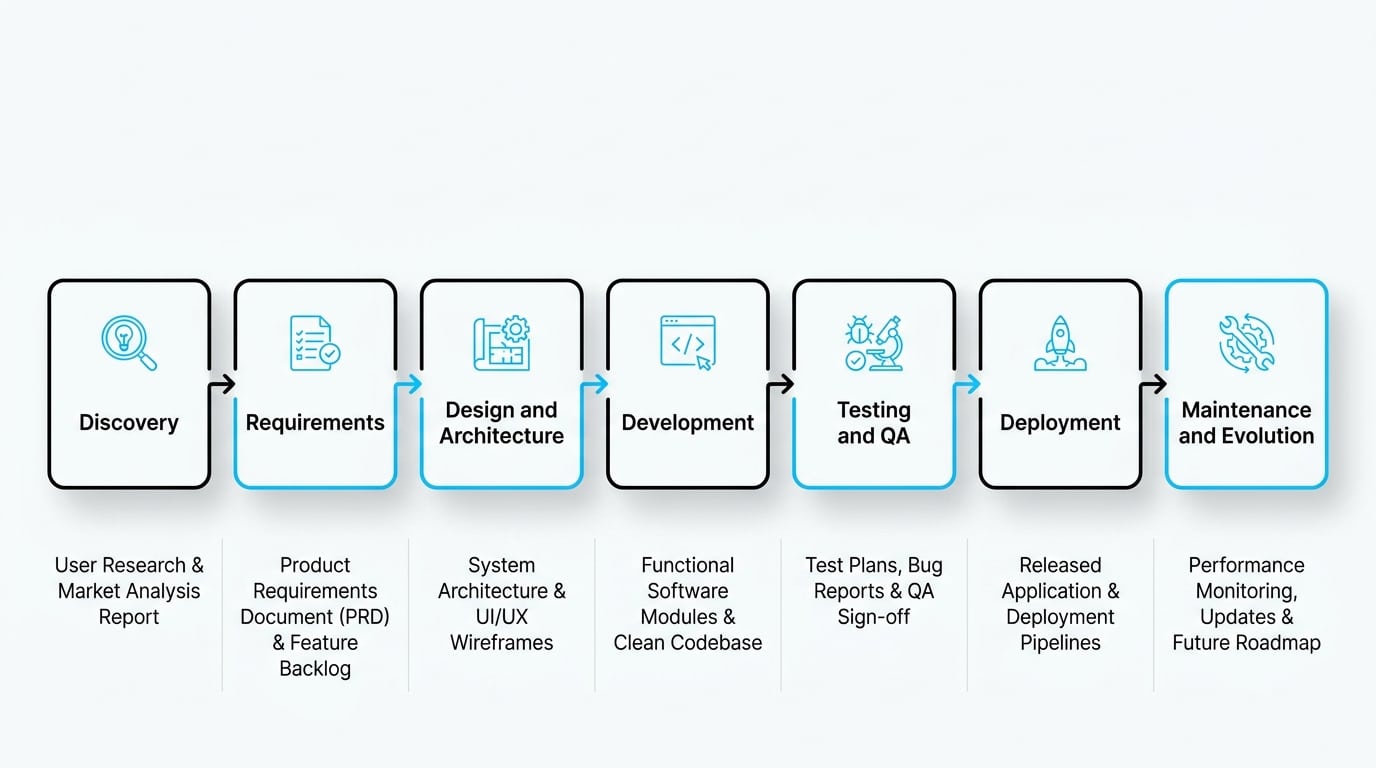

While different organizations use different names, most modern lifecycles share the same backbone. The phases are rarely strictly linear; teams loop back, run discovery in parallel with development, and treat post-launch as another input to planning. The table below maps each stage to its core purpose and primary deliverable, giving your team a shared reference point regardless of the methodology you use.

| Stage | Core Purpose | Primary Deliverable |

|---|---|---|

| Discovery | Validate the problem and audience | Validated problem statement |

| Requirements | Translate insights into scope | Prioritized backlog and acceptance criteria |

| Design and Architecture | Define UX and system structure | Prototypes and architecture diagrams |

| Development | Build incrementally | Working software increments |

| Testing and QA | Verify quality continuously | Test reports and defect closure |

| Deployment | Release safely to production | Live, monitored product |

| Maintenance and Evolution | Improve based on real usage | Updated versions and KPI gains |

In high-performing teams, discovery never fully stops — it runs as a lightweight, continuous track alongside development. While one sprint delivers working increments, a parallel track is already validating assumptions for the next cycle, reducing the time between learning and building.

How does the Discovery phase define the project’s future?

Discovery is where you decide whether to build something at all. The goal is to identify real user pain points, map alternatives, and validate critical assumptions before a single line of production code is written. Skipping or rushing this phase is the single biggest predictor of product failure. Strong discovery produces clarity on who the user is, what job they are hiring the product to do, and what success looks like in measurable terms. It also surfaces the riskiest assumptions early, when the cost of being wrong is still low.

How to define a Problem Statement vs. a Value Proposition

A problem statement describes the user’s pain in their own context — specific, observable, and frequent enough to matter commercially. A value proposition is your team’s hypothesis about how the product will resolve that pain better than existing alternatives. Keeping them separate is critical: confusing the two leads to solutions in search of problems, which is one of the most common and costly errors a product team can make. Write both before any design work begins, and revisit them after each round of user research.

Why user research is the foundation of all development phases

User research informs requirements, shapes UX, prioritizes the backlog, and defines the KPIs your team will track after launch. Without it, every later phase is built on guesswork. Even lightweight research — five structured interviews, a clickable prototype test, or a landing-page conversion experiment — dramatically reduces downstream rework and dramatically increases the signal-to-noise ratio in your backlog. The investment in discovery pays back multiples in every subsequent phase.

How do you translate Requirements into actionable technical scope?

Requirements are where many teams get stuck — drowning in documents that nobody reads and that become stale within a sprint. The modern approach is outcome-based: define the result you want, the user scenario, the acceptance criteria, and the measurable signal of success. Standards like ISO/IEC/IEEE 29148 provide a solid baseline for requirements engineering, but the real skill is keeping documentation lean enough to support fast iteration while precise enough to prevent ambiguity in development and testing. Requirements that are too vague create rework; requirements that are too verbose create waste.

Balancing functional requirements with non-functional necessities

Functional requirements describe what the system does; non-functional requirements describe how well it does it — covering performance, scalability, security, accessibility, and availability. Ignoring the non-functional side is how products become unmaintainable at scale. Defining SLIs and error budgets at the requirements stage, rather than after the first production incident, sets a far more defensible quality baseline for your entire engineering team.

What is the Definition of Ready?

A Definition of Ready is a shared team checklist that confirms a backlog item is clear enough to enter development: scope is defined, dependencies are known, acceptance criteria are written, and design assets are attached where needed. It prevents half-baked tickets from clogging sprints and reduces the ambiguity that leads to mid-sprint clarification cycles and context-switching overhead. Teams that enforce a Definition of Ready consistently report higher sprint predictability and lower rework rates.

Research from the Standish Group’s CHAOS Report consistently shows that incomplete or misunderstood requirements are among the top three contributors to project failure. Clear, outcome-based requirements documented before a sprint starts reduce mid-sprint changes by a measurable margin across teams of all sizes.

How should Design and Architecture impact the product lifecycle?

Design and architecture decisions made in the first weeks shape costs for years. UX design — wireframes, prototypes, usability testing — finalizes how users will experience the product before backend complexity multiplies. In parallel, architectural decisions about data models, APIs, scalability patterns, and integration boundaries are set for the long term. Getting these decisions right requires senior engineers who have seen what breaks at scale and what stays flexible under pressure.

An embedded boutique partner aligned to your stack and culture can be especially valuable here — not because design and architecture are exotic disciplines, but because the cost of a wrong decision compounds with every feature that inherits it. Investing in experienced architectural thinking upfront is one of the highest-ROI decisions your organization can make before the first sprint begins.

Why initial architectural decisions are the hardest to change later

Choosing the wrong database, coupling services too tightly, or hardcoding tenant logic are decisions that compound over time. Each new feature inherits those constraints, and refactoring becomes exponentially more expensive as the codebase grows and team knowledge fragments. The patterns that feel “fast enough for now” during an early sprint often become the dominant source of velocity loss twelve months into the product’s life — a pattern that a senior architecture review can identify and mitigate before it takes root.

What defines the Development phase in modern workflows?

Modern development is iterative, automated, and continuously validated. Teams build in small increments, merge frequently, and rely on automated pipelines for testing and deployment. Code reviews, branching strategies, dependency management, and consistent coding standards keep quality high without slowing velocity. The principle is straightforward: small, frequent, reversible changes beat large, infrequent, risky ones every time.

This is also where embedded partnership models prove their worth. Senior engineers who understand your stack and culture deliver code that integrates cleanly, mentors junior team members effectively, and maintains the architectural integrity established in the design phase — rather than creating parallel silos that diverge over time. Teams that operate with this kind of end-to-end ownership move faster and accumulate less technical debt per sprint than fragmented models where responsibility is divided across vendors.

- Small, frequent commits to trunk or main

- Automated unit and integration tests on every push

- Peer code review with documented standards

- Dependency pinning and vulnerability scanning

- Feature flags for safe incremental exposure

- Clear Definition of Done before sprint close

- Long-lived feature branches that create merge conflicts

- Skipping code review under sprint pressure

- Accumulating TODO comments instead of tickets

- Hardcoded configuration and environment assumptions

- Missing observability instrumentation at build time

- Treating security as a post-development concern

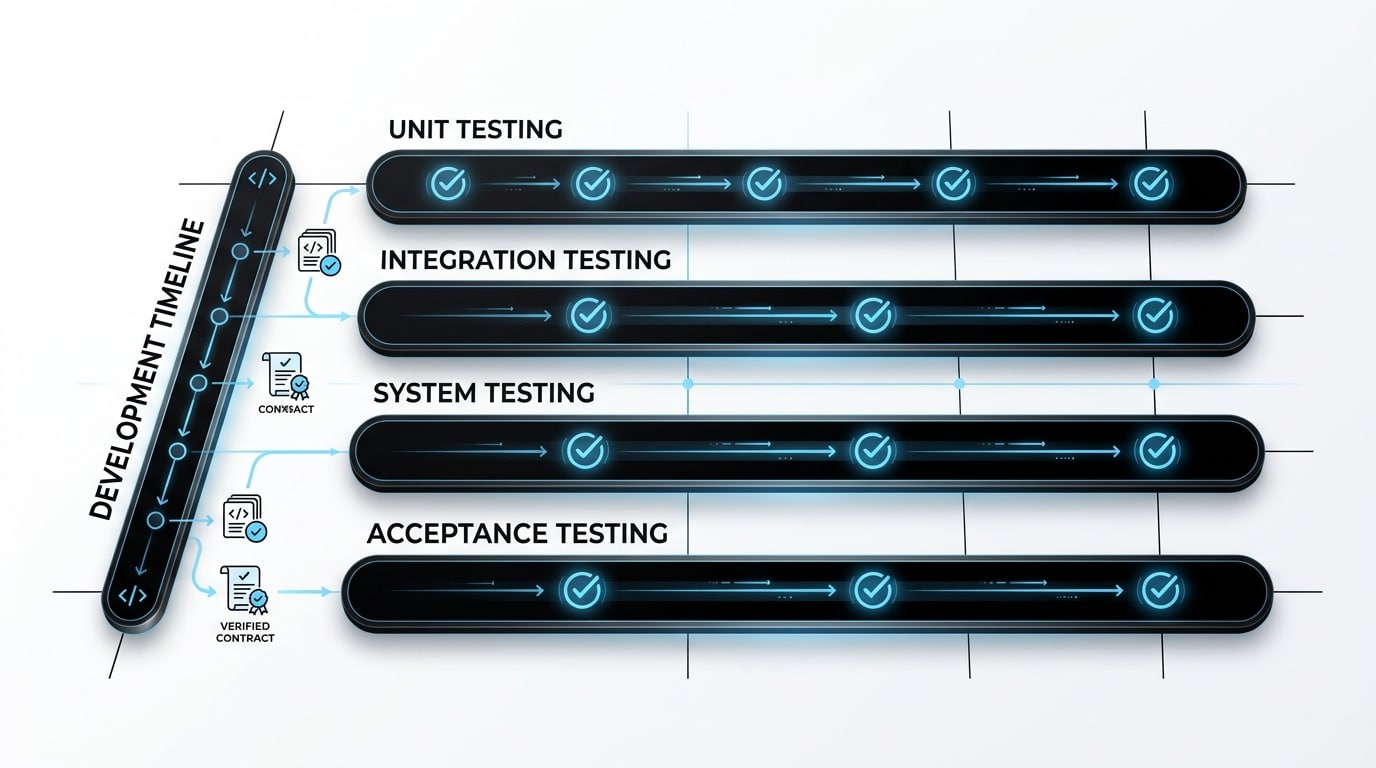

Why is Testing and QA integrated throughout the process?

Testing is not a final gate — it is a continuous validation layer running alongside development from the first sprint. According to ISTQB’s official guidance on test levels, mature teams operate across component, integration, system, and acceptance testing simultaneously. Unit tests catch regressions in seconds; integration tests verify contracts between services; system tests validate end-to-end flows; and acceptance tests confirm that the business value described in the requirements has actually been delivered.

This layered approach ensures reliability regardless of whether your team uses Agile, DevOps, or a hybrid methodology. It also dramatically reduces the cost of fixing defects compared to finding them after release — a dynamic that has been well-documented across the industry and that underpins every serious shift-left testing strategy. Teams that build testing discipline early find that quality becomes a natural outcome of their process rather than a crisis they manage at the end of each cycle.

Comparing testing investment: early vs. late

The economics of testing are well-established: defects caught early are an order of magnitude cheaper to fix than those caught in production. This cost curve is the core argument behind shift-left testing strategies and continuous integration pipelines. The table below illustrates the typical cost-and-impact pattern that every engineering leader should have visible when making investment decisions about test automation and QA capacity.

| Defect Found In | Relative Fix Cost | Business Impact |

|---|---|---|

| Requirements and Design | 1x | Minimal — adjust scope before build |

| Development | 5x–10x | Sprint slip, rework overhead |

| QA and Pre-release | 10x–20x | Launch delay, stakeholder friction |

| Production | 50x–100x | Customer churn, reputation damage, incident cost |

Shift-left testing does not mean writing more tests — it means writing the right tests at the right time. Start with a clear acceptance criterion for every story, automate the smoke suite before the first release, and add regression coverage incrementally as the product matures. Quality compounds when it is treated as a continuous activity rather than a pre-launch sprint.

How to handle Deployment and the Launch process?

Deployment is the moment code becomes a product, but launch is a controlled process — not a single event. Strong teams use feature flags, canary releases, and gradual rollouts to limit blast radius when something unexpected surfaces. Runbooks, monitoring dashboards, and on-call rotations are prepared in advance, so the team is never scrambling in the dark during a critical moment.

The goal is to make every release reversible: if key metrics degrade after a rollout, you can roll back in minutes, not hours. Pairing deployment automation with clear SLIs and SLOs — as outlined in the Google SRE service best practices — turns launch day from a nervous milestone into a routine operation. Teams that reach this level of deployment maturity release more frequently and with measurably lower incident rates than those relying on manual, infrequent release processes.

What KPIs measure success after the launch?

Once the product is live, KPIs become the language of decision-making. The right metrics depend on the product’s stage and goals, but they generally span adoption (signups, activation rate), engagement (DAU/MAU, retention cohorts), business outcomes (revenue, conversion, churn), and reliability (p95 latency, error rate, uptime). Connecting these metrics directly to backlog prioritization is what transforms raw data into purposeful product progress.

How to differentiate between metrics for an MVP vs. a mature product

For an MVP, focus on activation and qualitative feedback — does the product solve the core problem at all? Quantitative data is sparse and noisy at this stage, so depth of user insight matters more than breadth. For a mature product, shift to retention, expansion revenue, NPS, and operational efficiency. Tracking the wrong metrics at the wrong stage leads to misleading conclusions that can misallocate engineering capacity for entire quarters at a time.

Turning data into a new backlog for the next iteration

Each KPI movement should produce a hypothesis: why did retention drop in week two? Why is activation consistently lower for one customer segment? Those hypotheses become experiments; experiments become backlog items with measurable success criteria — closing the loop between data and development, and ensuring that every sprint is directed by evidence rather than assumption.

Why is Maintenance and Iteration the longest phase?

Most of a product’s life is spent in maintenance and evolution — bug fixes, performance improvements, security patches, refactoring, and new features driven by real-world usage. This phase is where technical debt either compounds or gets controlled, and where customer trust is either reinforced or eroded by the reliability and quality of each update. Allocating dedicated capacity for technical debt and reliability work — rather than treating it as optional backlog — is the difference between a product that ages gracefully and one that becomes a liability to your business.

When is it time for Refactoring or a full Re-platforming?

The signals are consistent across product teams: development velocity drops measurably sprint-over-sprint, recurring incidents cluster in the same architectural areas, onboarding new engineers takes longer than it should, and infrastructure costs grow faster than revenue. When the cost of change consistently exceeds the value of new features, it is time to invest in structural improvement — whether that means targeted refactoring or a phased re-platforming strategy planned carefully with your engineering leadership.

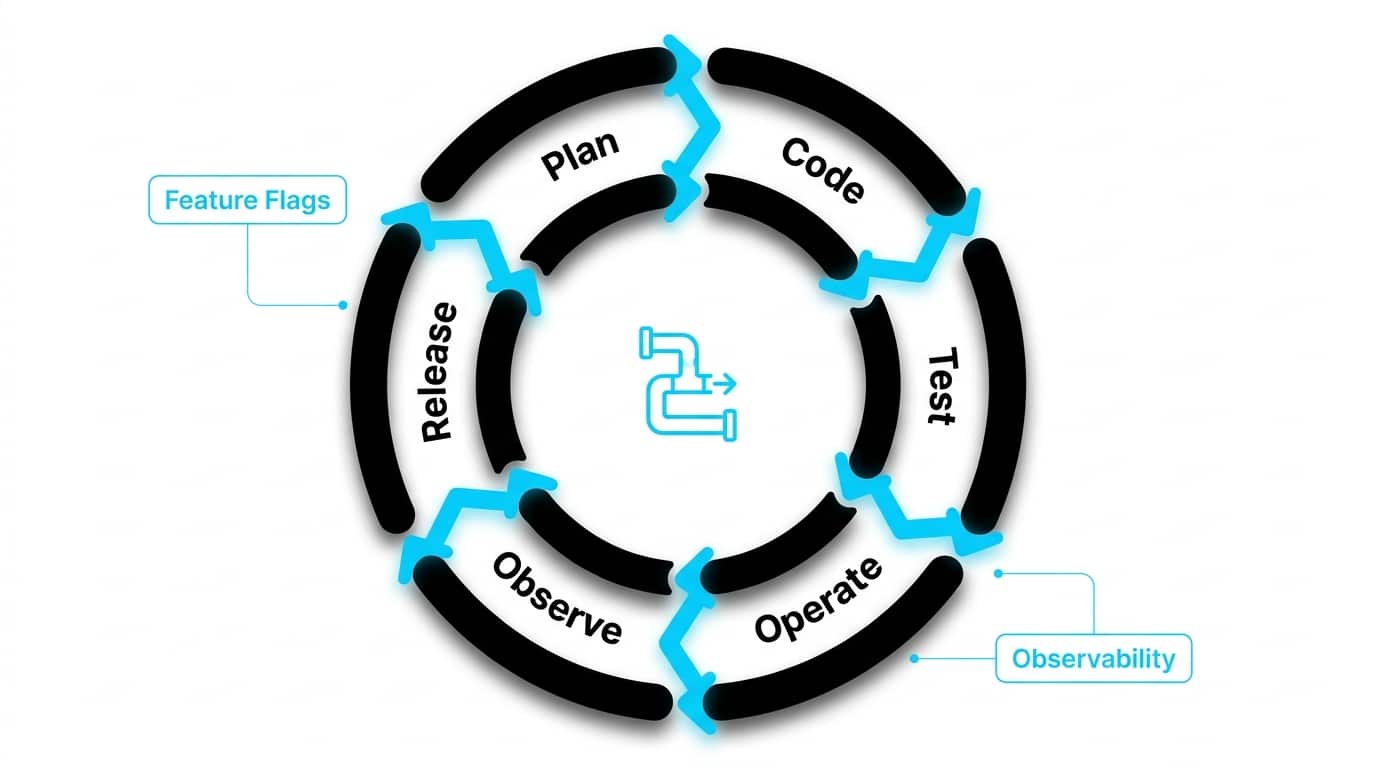

How do DevOps and CI/CD enable a fluid lifecycle?

DevOps and CI/CD turn the lifecycle into a continuous loop: plan, code, test, release, operate, observe, learn, repeat. Automation removes the manual handoffs that historically slowed releases and introduced errors at every transition point. Frameworks like the NIST NCCoE DevSecOps practices demonstrate how security, quality, and speed can coexist when integrated into the pipeline rather than treated as separate gates that slow delivery at the end of each cycle.

DORA metrics — deployment frequency, lead time for changes, change failure rate, and mean time to recover — provide your team with an objective benchmark for pipeline maturity. High-performing engineering organizations deploy multiple times per day with change failure rates below 15% and recovery times measured in under an hour. These outcomes are achievable for startups and scaleups that invest in pipeline discipline and observability from the beginning of the lifecycle.

What is the difference between Deployment and Release?

Deployment puts code on production servers; release exposes it to users. Decoupling them — through feature flags or progressive delivery — lets your team ship continuously while controlling user exposure precisely. This separation is what enables teams to maintain a high deployment frequency without accepting the risk of exposing every change to 100% of users simultaneously.

Why Observability is the eyes and ears of the product team

Logs, metrics, and distributed traces give your team the ability to diagnose issues in minutes rather than days. Without observability, every production incident is a guessing game that consumes disproportionate engineering time. With it, your team learns from production faster than competitors can ship new features — turning operational data into a genuine competitive advantage rather than a reactive burden.

Why shift-left security is critical to the lifecycle?

Security cannot be a final checkbox. Shift-left security means embedding threat modeling, dependency scanning, secrets management, and secure coding standards from the requirements phase onward. Treating security as an afterthought creates technical debt that becomes harder and more expensive to remove with every release that builds on top of it. The compounding effect of unresolved security debt is one of the most significant and underestimated risks in product development.

Modern teams integrate SAST (static application security testing), DAST (dynamic application security testing), and software supply chain controls directly into CI/CD pipelines, so vulnerabilities are caught when they are cheapest to fix — at commit time, not after a breach. For startups operating in regulated industries or targeting enterprise customers, a documented shift-left security practice is increasingly a commercial requirement, not just an engineering preference.

First, add dependency vulnerability scanning to every pull request — automated tools make this trivially easy. Second, run a lightweight threat model at the start of each major feature, identifying trust boundaries and sensitive data flows. Third, enforce secrets management from day one: no credentials in code, no exceptions. These three steps alone address the majority of common vulnerability classes before they reach production.

Mapping business needs to lifecycle support

For founders and tech leaders evaluating how an embedded partner fits into the lifecycle, the table below maps common business needs to the practical capabilities that address them — and how a boutique partnership model, like the one Sentice offers, delivers on each one without the overhead of large consultancies or the risk of fragmented vendors.

| Business Need | How a Boutique Partner Helps |

|---|---|

| Scaling capacity without losing quality | Embedded senior engineers aligned to your culture and stack, integrated as a real extension of your team |

| Predictable delivery timelines | Dedicated team with stable velocity, clear ownership, and transparent progress reporting |

| End-to-end product execution | Discovery, design, development, QA, and operations delivered in one aligned, accountable team |

| Reducing technical debt | Senior architecture review, disciplined refactoring cycles, and quality guardrails built into the process |

| Faster time-to-market for MVPs | Lean discovery and focused incremental delivery that ships working software in weeks, not months |

What are the most common pitfalls in the software product development lifecycle?

The most damaging mistakes are predictable — and preventable. Skipping discovery and building features that nobody validated is the single most common source of product failure. Letting scope expand without explicit trade-offs burns engineering capacity without proportional business value. Deploying without monitoring means flying blind in production. Ignoring metrics after launch means growth is managed by intuition rather than evidence. Allowing the backlog to accumulate without prioritization turns every sprint into a negotiation rather than focused delivery.

The antidote is structural: explicit validation gates at each phase transition, outcome-based prioritization tied to measurable KPIs, monitoring defined before deployment begins, and a permanent capacity allocation for quality, security, and technical debt. Teams that build these guardrails into their lifecycle deliver consistently and predictably; teams that treat them as optional repeat the same expensive mistakes on every project, regardless of how talented their engineers are.

According to the Standish Group, only 31% of software projects are delivered on time and on budget. The differentiators between successful and challenged projects consistently come down to clarity of requirements, stakeholder alignment, and structured lifecycle governance — not the size of the engineering team or the sophistication of the technology stack.

Frequently asked questions

What is the software product development lifecycle in short?

It is the end-to-end process that takes a software product from idea through discovery, design, development, testing, launch, and ongoing evolution — combining business strategy and engineering execution into one repeatable framework. It is cyclical by design: each iteration produces learning that feeds the next cycle.

What is the difference between SDLC and the software product development lifecycle?

SDLC is the engineering subset focused on how software gets built — requirements, design, code, test, deploy, maintain. The product development lifecycle is broader and includes market research, product strategy, go-to-market planning, post-launch growth, and eventual product retirement. A product can have a technically flawless SDLC and still fail commercially if the broader lifecycle disciplines are absent.

How many stages are there in the software product development lifecycle?

Most modern frameworks describe seven core stages: discovery, requirements, design and architecture, development, testing and QA, deployment, and maintenance and evolution. In practice, these stages overlap and iterate rather than run strictly in sequence — discovery continues alongside development, and post-launch data feeds directly back into planning.

What is the difference between an MVP and a prototype?

A prototype demonstrates an idea or interaction — often without working code — to explore a concept or validate a UX hypothesis quickly and cheaply. An MVP is a real, functioning product with the smallest feature set needed to deliver genuine value and gather learning from actual paying or active users. Prototypes reduce design risk; MVPs reduce market risk.

When should discovery happen before development?

Always before any significant investment of engineering time. Discovery validates the problem, the audience, and the proposed value before code is written, dramatically reducing the risk of building something that does not fit the market. Even a one-week discovery sprint — five user interviews, a competitive landscape review, and a validated problem statement — pays back its cost many times over in reduced rework downstream.

How do you measure success after launch?

Define your KPIs before launch and tie them directly to your product stage. For early-stage products, prioritize activation rate and qualitative user feedback — does the product solve the stated problem? For mature products, focus on retention cohorts, expansion revenue, NPS, and reliability metrics. Connect every metric movement to a hypothesis and a corresponding decision in your backlog to ensure data drives action.

How does shift-left security fit into the lifecycle?

Shift-left security integrates security activities — threat modeling, dependency vulnerability scanning, secure coding standards, and secrets management — into the earliest phases of the lifecycle. The goal is to catch vulnerabilities when they are cheapest to fix: at the requirements stage or at commit time, rather than after a production incident or a third-party security audit. It also reduces the adversarial dynamic between security and engineering teams by making security a shared, continuous responsibility rather than a late-stage gate.

How does a boutique partner differ from a large consultancy for lifecycle support?

A boutique partner like Sentice embeds directly into your product organization as a real extension of your team — culture-aligned, stack-aligned, and committed to your roadmap across the full lifecycle. Large consultancies typically operate through fixed-scope engagements with rotating staff, which fragments knowledge and creates handoff risk. A boutique model means the same senior engineers who shaped your architecture are still contributing six months later, with accumulated context that compounds into faster, higher-quality delivery over time.