AI Development Services: A Practical Guide for Scaleups and Enterprises

Everything you need to evaluate, select, and scale a production-grade AI development engagement — from data readiness to MLOps.

- Professional AI development spans the full lifecycle — use case discovery, data strategy, model engineering, integration, and continuous MLOps monitoring.

- The right AI partner aligns on business KPIs and problem framing before discussing models, not after, reducing the risk of wasted investment.

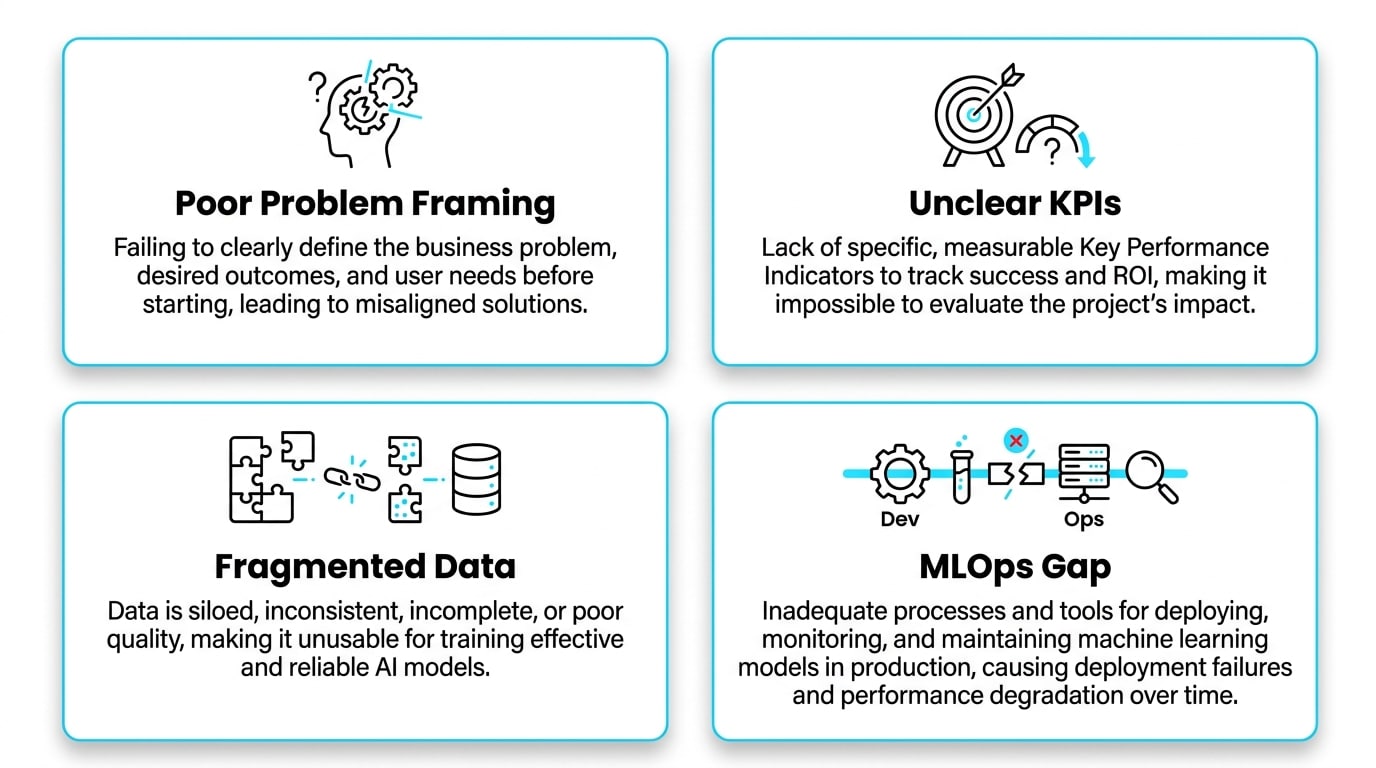

- Most enterprise AI projects fail due to poor problem framing, fragmented data, and the MLOps gap between a working notebook and a working production system.

- RAG, fine-tuning, and prompting each serve distinct purposes; the strongest enterprise portfolios combine all three based on the specific use case.

- Privacy-by-Design and MLOps are not optional extras — they are the infrastructure that determines whether an AI system delivers long-term, measurable value.

- Custom AI development delivers competitive advantage when domain-specific data, compliance requirements, or proprietary workflows are central to the problem.

Table of Contents

- Essential Components of Professional AI Development Services

- How to Select the Ideal AI Development Partner

- AI Consulting vs. AI Development Services

- Why Enterprise AI Projects Often Fail

- The Full Cycle of AI Development Services

- Process Map: Discovery to Continuous Improvement

- Custom AI Development vs. Off-the-Shelf Tools

- When to Leverage Generative AI and LLMs

- Why RAG is Essential for Enterprise GenAI

- Architecting AI Agents for Workflow Automation

- Common Mistakes Teams Make with AI Agents

- Measuring ROI and Project Success

- Comparing Decision Approaches: Prompting vs. RAG vs. Fine-tuning

- Managing Data Privacy and Security in AI Solutions

- The Role of MLOps in Long-Term Value

- Future-Proofing Your AI Strategy

- Mapping Business Needs to Practical Capabilities

- Frequently Asked Questions

For today’s scaleups and enterprises, the pressure to embed artificial intelligence into core operations has never been greater — but the gap between an exciting demo and a production-grade system is where most initiatives stall. Choosing the right approach to AI development services is no longer about chasing the latest model; it is about building durable, secure, and measurable systems that align with real business outcomes.

Whether you are exploring your first proof of concept or scaling an existing ML portfolio, the path forward requires a partner who understands the full lifecycle: from data readiness to MLOps, from governance to ROI. This guide breaks down what professional AI development truly involves, how to evaluate vendors, and how to avoid the pitfalls that derail so many enterprise AI projects. Throughout, we reference insights from Sentice, a boutique tech partner with a track record of embedding senior engineering teams directly into product organizations to deliver end-to-end AI solutions.

Essential Components of Professional AI Development Services

Modern AI development services cover the entire lifecycle of an intelligent system — not just model training. A complete engagement includes use case discovery, data preparation, model engineering, system integration, and production MLOps with continuous monitoring. The shift in 2024–2025 is unmistakable: organizations no longer want isolated pilots; they want sustainable production environments that deliver predictable value.

Today’s services typically blend classical machine learning — classification, forecasting, anomaly detection — with Generative AI components such as LLM-powered agents, retrieval pipelines, and document understanding. A boutique tech partner helps you align these capabilities with business KPIs, ensuring that every layer — data, model, application, infrastructure — is engineered for scale rather than for a one-off demo.

Use case discovery: Defining the business problem, success metrics, and data landscape before any model work begins. This stage determines whether an initiative is worth pursuing and prevents costly misdirection.

Model engineering: Selecting, training, evaluating, and iterating on machine learning or generative AI models against agreed KPIs. Includes experiment tracking, evaluation pipelines, and bias assessment.

MLOps: The operational discipline that keeps a deployed model healthy: automated retraining, drift detection, performance logging, version control, and incident response protocols.

The highest-value AI systems are designed for production from day one. Teams that treat the notebook as the destination — rather than the starting point — routinely underestimate integration complexity, latency requirements, and the operational overhead of keeping a model accurate over time.

How to Select the Ideal AI Development Partner for Your Business

Selecting a partner is less about portfolio size and more about fit. The right vendor demonstrates business-problem alignment first, then technical depth. Look for teams that begin with Discovery and KPI definition before discussing models, that have proven MLOps expertise, and that can articulate clear security protocols for sensitive data. A strong partner will also walk you through how a Proof of Concept translates into an MVP, and how that MVP becomes a hardened production system.

A boutique partnership model, where senior engineers operate as an embedded extension of your team, tends to deliver better alignment than large outsourcing shops. This is one of the advantages organizations gain when working with Sentice: senior, culture-aligned teams that integrate directly into your workflow rather than operating as a distant vendor.

Assessment Frameworks for AI Vendors

Strong vendors operate with a production-first mindset. Ask candidates to map their process against an established framework such as the NIST AI Risk Management Framework, which organizes AI risk management into four core functions: Govern, Map, Measure, and Manage. A partner who can speak fluently to these functions, rather than only to model accuracy, is far more likely to deliver a system that survives in production.

Identifying Red Flags in AI Consultancy

Be cautious of providers who showcase only model benchmarks while glossing over data pipelines, access control, and compliance. Other warning signs include unclear ownership of code, models, and data; vague answers about evaluation methodology; and reluctance to discuss long-term monitoring. If a vendor cannot describe how they will handle drift, retraining, or incident response, you are looking at a future production headache.

Green flags:

- Leads with KPI and problem definition

- Has documented MLOps and deployment processes

- Clearly assigns code and model ownership to your team

- Discusses drift, retraining, and incident response upfront

- References NIST AI RMF or equivalent governance frameworks

- Embeds senior practitioners, not junior subcontractors

Red flags:

- Showcases only benchmark scores, not production metrics

- Vague or evasive on data security and access controls

- Unclear IP ownership terms in the contract

- No defined process for evaluation or bias testing

- Reluctant to commit to post-deployment support

- Offers a single large contract before a scoped POC

AI Consulting vs. AI Development Services: Defining the Scope

AI consulting focuses on strategy, feasibility, and ROI modeling — answering whether an initiative is worth pursuing. AI development services focus on building, integrating, deploying, and maintaining the system that delivers that ROI. Most enterprises need both: a short, sharp consulting phase to define KPIs and data readiness, followed by a development engagement that owns delivery end-to-end.

This is where partnering on end-to-end software development becomes critical. Technical advisors should lead the project lifecycle from Discovery through deployment, ensuring that strategy, engineering, and operations remain aligned. The handoffs between phases — where consulting recommendations meet engineering reality — are exactly where most projects lose momentum.

When consulting and development are handled by different teams, critical context is lost at the handoff. The most successful engagements use a single team with both strategic and engineering depth — someone who can draw the architecture diagram in the morning and review the pull request in the afternoon.

Why Do Enterprise AI Projects Often Fail?

Industry data and audits consistently point to the same culprits: poor problem framing, unclear KPIs, fragmented data, and the MLOps gap between a working model and a working system. Many projects also fail because organizations underestimate the operational discipline required: governance, monitoring, retraining, and incident response are not optional extras.

Bridging the Data Readiness Gap

Before writing a single line of model code, audit your proprietary data. Is it labeled, accessible, governed, and representative of the production environment? Privacy-related risks in data repositories are well documented, and they affect both compliance and model reliability. A data audit catches these issues early, when they are still inexpensive to fix — before a poorly labeled training set becomes the ceiling on your model’s accuracy.
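Parts of a first-pass data audit can be automated. The sketch below is illustrative only: the record layout, the `audit_records` helper, and the `label` field name are assumptions, not part of any specific toolchain. It reports the share of unlabeled rows, the share of exact duplicates, and the label distribution, which are three signals that tend to surface labeling and representativeness problems early.

```python
from collections import Counter
from typing import Any


def audit_records(records: list[dict[str, Any]], label_field: str = "label") -> dict[str, Any]:
    """Summarize basic readiness signals for a labeled dataset (hypothetical layout)."""
    total = len(records)
    if total == 0:
        return {"total": 0, "missing_label_rate": 0.0, "duplicate_rate": 0.0, "label_share": {}}

    # Rows without a usable label cannot contribute to supervised training.
    labels = [r.get(label_field) for r in records]
    missing = sum(1 for v in labels if v is None or v == "")

    # Exact duplicates inflate apparent volume and can leak across train/test splits.
    seen: set[tuple] = set()
    duplicates = 0
    for r in records:
        key = tuple(sorted((k, repr(v)) for k, v in r.items()))
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)

    # Label distribution over labeled rows only; a heavy skew is worth a closer look.
    counts = Counter(v for v in labels if v is not None and v != "")
    labeled = sum(counts.values())
    label_share = {k: c / labeled for k, c in counts.items()} if labeled else {}

    return {
        "total": total,
        "missing_label_rate": missing / total,
        "duplicate_rate": duplicates / total,
        "label_share": label_share,
    }


rows = [
    {"text": "a", "label": "spam"},
    {"text": "a", "label": "spam"},   # exact duplicate of the first row
    {"text": "b", "label": None},     # unlabeled
    {"text": "c", "label": "ham"},
]
report = audit_records(rows)
```

A report like this does not replace a governance or privacy review; it only makes the cheapest problems visible before model work starts.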

Mistakes in Productionization

Models that perform beautifully in a notebook often collapse in production due to latency constraints, cost spikes, integration mismatches, or undetected drift. Productionization requires explicit engineering for throughput, observability, and rollback. Without it, you ship a science experiment, not a product. Teams that skip this stage routinely discover their issues only after real users are impacted — the worst possible time to find them.
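Rollback is easier to reason about when the trigger is written down as code. The following is a minimal sketch of a rollback check, assuming a 500 ms p95 latency budget and a 1% error-rate ceiling; both thresholds and the `should_rollback` name are hypothetical and would come from your own SLA.

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def should_rollback(
    latencies_ms: list[float],
    errors: int,
    requests: int,
    p95_budget_ms: float = 500.0,   # assumed budget, set from your SLA
    max_error_rate: float = 0.01,   # assumed ceiling, set from your SLA
) -> bool:
    """Return True when the new release breaches the latency or error budget."""
    if requests == 0 or not latencies_ms:
        return False  # no traffic yet, nothing to judge
    too_slow = percentile(latencies_ms, 95) > p95_budget_ms
    too_many_errors = errors / requests > max_error_rate
    return too_slow or too_many_errors


# 6% of requests are slow, so the p95 latency exceeds the budget.
slow_release = [100.0] * 94 + [900.0] * 6
decision = should_rollback(slow_release, errors=0, requests=100)
```

In practice the inputs would come from your observability stack over a fixed window after each deployment.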

Research consistently shows that the majority of enterprise ML models never reach production. Of those that do, a significant portion are decommissioned within twelve months due to performance degradation, integration failure, or poor adoption — all issues that disciplined MLOps and user-centric design can prevent.

The Full Cycle of AI Development Services

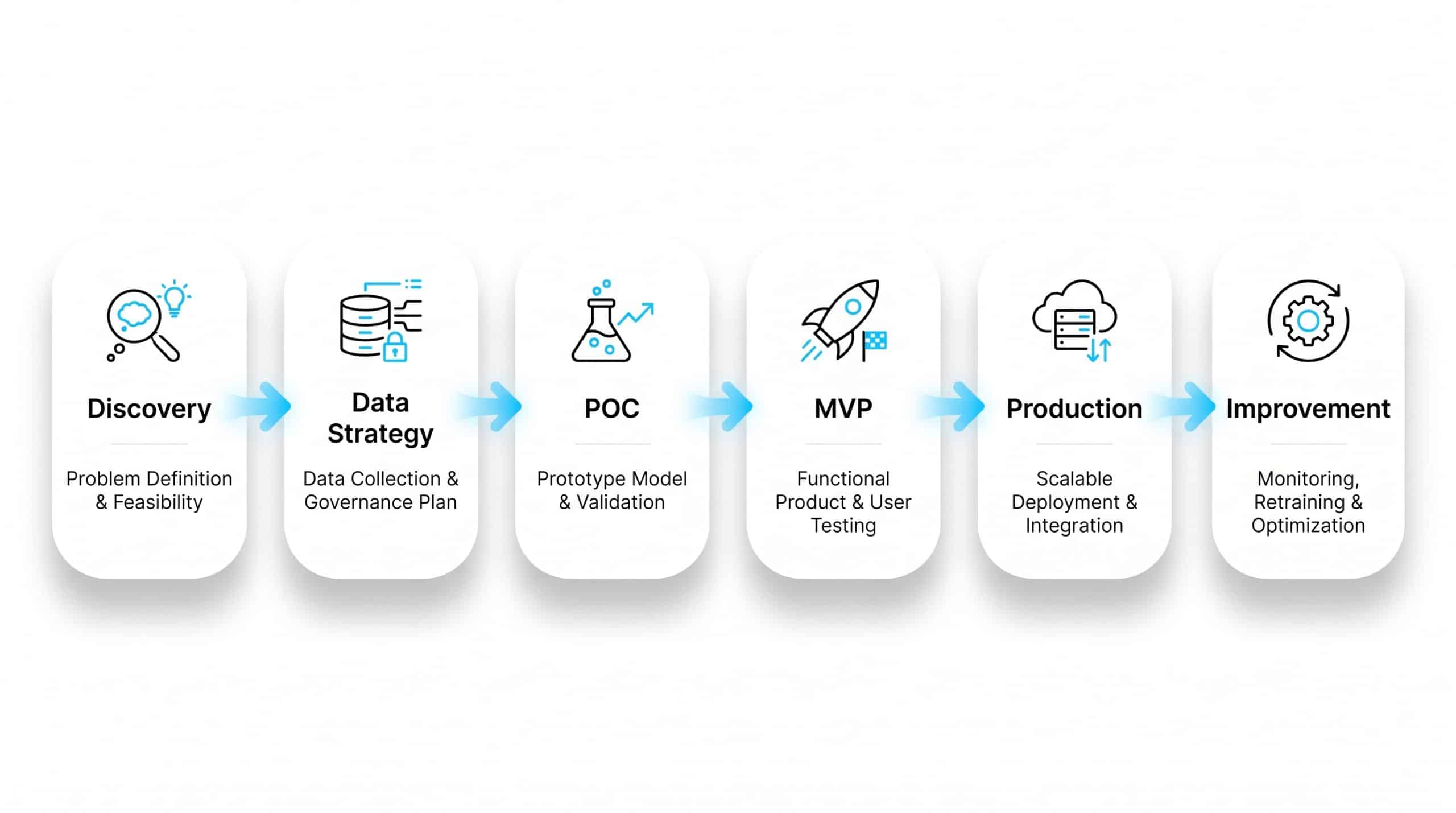

A mature delivery cycle progresses through Discovery, Data Strategy, POC, MVP construction, Production Deployment, and Continuous Improvement. Each stage has its own deliverables: a use case scorecard at Discovery; a data inventory and labeling plan at Data Strategy; a baseline model and evaluation report at POC; an integrated system with a security review at MVP; a deployment with full MLOps at Production; and an ongoing improvement loop driven by monitoring at Continuous Improvement.

Data labeling and model monitoring are not optional. Labeling determines the ceiling of model quality, while monitoring catches performance drift before it impacts users. Skipping either stage is the fastest path to silent failure — where the system appears to work but has quietly degraded to the point of producing unreliable outputs that no one has formally measured.

Process Map: From Discovery to Continuous Improvement

| Stage | Primary Goal | Key Deliverable | Success Signal |
|---|---|---|---|
| Discovery | Frame the business problem | Use case scorecard + KPI | Measurable target agreed |
| Data Strategy | Assess and prepare data | Data inventory + labeling plan | Data quality benchmarks met |
| POC | Validate technical feasibility | Baseline model + evaluation | Beats baseline on KPI |
| MVP | Integrate into workflows | Working system + security review | Used by real users |
| Production | Deploy with reliability | MLOps pipeline + monitoring | SLA targets achieved |
| Improvement | Refine and adapt | Retraining + drift reports | KPI improves over time |

Understanding Custom AI Development vs. Off-the-Shelf Tools

Custom AI development is the right choice when your problem depends on domain-specific data, proprietary workflows, strict compliance, or a competitive differentiator that off-the-shelf products cannot provide. Off-the-shelf tools shine for general-purpose, non-critical automation where speed of adoption matters more than precision or differentiation.

The decisive question is ownership. With custom development, your organization owns the data pipeline, the model behavior, and the integration logic. With off-the-shelf tools, you rent capability — convenient, but constrained. Most enterprises end up with a portfolio: off-the-shelf for commodity tasks, custom for the workflows that drive real business value.

If an AI capability is central to your competitive advantage — your pricing engine, your risk model, your recommendation logic — it should be custom. If it is a commodity function available in any SaaS tool, off-the-shelf wins on cost and speed. The clarity of that line is one of the first things a good Discovery engagement should establish.

When to Leverage Generative AI and LLMs

Generative AI is well suited for text summarization, content synthesis, advanced extraction, knowledge assistants, and natural-language interfaces over structured systems. Classical ML, by contrast, remains the right tool for forecasting, ranking, anomaly detection, and decisioning over structured data. The two are complementary, not competing.

The strongest AI portfolios mix both: ML handles quantitative prediction with interpretable performance metrics, while GenAI handles unstructured language and content. Choosing the right tool per use case is more important than picking a favorite technology — and a partner who can reason across both paradigms will save your team from costly wrong turns early in the engagement.

Why RAG is Essential for Enterprise GenAI

Retrieval-Augmented Generation (RAG) grounds an LLM's answers in your secure, proprietary data. Instead of relying on whatever the model memorized during training, RAG retrieves the most relevant internal documents at query time and instructs the model to answer based on them. Done well, this reduces hallucinations and keeps answers tied to current, authoritative sources.

For enterprises, RAG is also a governance enabler. When the retrieval layer is built to enforce access controls, users only see content they are authorized to access, which aligns with data protection guidance such as that published by the ICO on AI and data protection.
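One way to make retrieval respect access controls is to filter candidate documents by the caller's roles before any ranking happens. The sketch below assumes a hypothetical `Document` type carrying an `allowed_roles` set, and uses a toy word-overlap score as a stand-in for embedding similarity; a real system would delegate both to its vector store and identity provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset[str]  # hypothetical per-document access list


def score(query: str, text: str) -> int:
    """Toy relevance score: count of shared lowercase words (stand-in for embeddings)."""
    return len(set(query.lower().split()) & set(text.lower().split()))


def retrieve(query: str, docs: list[Document], user_roles: set[str], k: int = 3) -> list[Document]:
    """Return up to k relevant documents the user is allowed to see."""
    # Filter BEFORE ranking so unauthorized content never reaches the prompt.
    visible = [d for d in docs if d.allowed_roles & user_roles]
    ranked = sorted(visible, key=lambda d: score(query, d.text), reverse=True)
    return [d for d in ranked[:k] if score(query, d.text) > 0]


corpus = [
    Document("hr-1", "salary bands for engineers", frozenset({"hr"})),
    Document("kb-1", "how to reset vpn password", frozenset({"employee", "hr"})),
]
for_employee = retrieve("salary bands", corpus, {"employee"})  # HR-only doc is filtered out
for_hr = retrieve("salary bands", corpus, {"hr"})
```

Filtering before ranking, rather than after generation, means restricted text is never placed in the model's context in the first place.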

Architecting RAG for Accuracy

A solid RAG architecture relies on clean ingestion, high-quality embeddings, a tuned vector database, a retrieval-and-rerank pipeline, and explicit citation of sources. Quality is measured through groundedness, precision, and recall — not just user satisfaction. Without these metrics, “it feels accurate” becomes the only KPI, which is not defensible for production. Investing in evaluation infrastructure at the RAG layer pays dividends every time the system is updated or extended.
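Retrieval precision and recall can be computed from a small hand-labeled evaluation set. The sketch below assumes each query has a known set of relevant document IDs and that retrieved IDs are unique; the function name and data layout are illustrative. Groundedness is not covered here, since it usually needs human or model-based judging of the generated answer.

```python
def precision_recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> tuple[float, float]:
    """Precision@k and recall@k for one query, given unique retrieved document IDs."""
    top_k = retrieved[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Two of the top three results are relevant, and two of three relevant docs were found.
precision, recall = precision_recall_at_k(
    retrieved=["a", "b", "c", "d"],
    relevant={"a", "c", "e"},
    k=3,
)
```

Averaging these values over the evaluation set and tracking them per release gives a regression signal whenever chunking, embeddings, or reranking change.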

Architecting AI Agents for Workflow Automation

An AI agent is not a chatbot. Agents take goals, plan steps, call APIs, update systems of record, and complete multi-step tasks. They can open service tickets, update CRMs, generate reports, or orchestrate operational workflows. The value emerges when repeatable processes with well-defined decisions are automated end-to-end — reducing cycle time and freeing your team to focus on work that requires human judgment.

Agents managing business data must operate under explicit safety controls: scoped tools, permission boundaries, action logs, rate limits, and human-in-the-loop checkpoints for sensitive operations. Skipping these controls turns a productivity tool into an operational risk. The governance architecture for agents is not an afterthought — it should be designed before the first API call is wired up.
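The controls listed above can be enforced in a thin runtime layer that sits between the agent and its tools. This is a minimal sketch under stated assumptions: `Tool`, `GuardedAgentRuntime`, and the per-session call limit are hypothetical names and choices, not a specific agent framework's API.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class Tool:
    name: str
    func: Callable[..., Any]
    requires_approval: bool = False  # human-in-the-loop checkpoint for sensitive actions


@dataclass
class GuardedAgentRuntime:
    tools: dict[str, Tool]                 # scoped tools: anything not listed is denied
    max_calls: int = 10                    # simple per-session rate limit
    audit_log: list[dict[str, Any]] = field(default_factory=list)
    calls_made: int = 0

    def call(self, tool_name: str, approved_by: Optional[str] = None, **kwargs: Any) -> Any:
        # Log every attempt, including the ones that are denied.
        entry: dict[str, Any] = {"tool": tool_name, "args": kwargs, "approved_by": approved_by}
        self.audit_log.append(entry)

        tool = self.tools.get(tool_name)
        if tool is None:
            entry["outcome"] = "denied: tool not in scope"
            raise PermissionError(f"tool {tool_name!r} is not in scope")
        if self.calls_made >= self.max_calls:
            entry["outcome"] = "denied: rate limit"
            raise RuntimeError("call limit reached for this session")
        if tool.requires_approval and approved_by is None:
            entry["outcome"] = "denied: approval required"
            raise PermissionError(f"tool {tool_name!r} requires human approval")

        self.calls_made += 1
        try:
            result = tool.func(**kwargs)
        except Exception as exc:
            entry["outcome"] = f"error: {exc}"
            raise
        entry["outcome"] = "ok"
        return result


runtime = GuardedAgentRuntime(
    tools={
        "lookup_ticket": Tool("lookup_ticket", lambda ticket_id: f"status of {ticket_id}"),
        "issue_refund": Tool("issue_refund", lambda amount: f"refunded {amount}", requires_approval=True),
    }
)
status = runtime.call("lookup_ticket", ticket_id="T-1")
```

Keeping these checks outside the model means they hold regardless of what the model plans or how a prompt is manipulated.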

Common Mistakes Teams Make with AI Agents

Teams often deploy agents with broad permissions, no audit trail, and no fallback path when the agent fails. Another frequent mistake is launching an agent before the underlying process is well understood — automation amplifies clarity, but it also amplifies confusion. Map the human workflow first, identify the deterministic steps, and let the agent handle the rest under supervision.

Recommended practices:

- Scope tool permissions to the minimum required

- Log every action with a full audit trail

- Define fallback paths for every failure mode

- Require human-in-the-loop for high-stakes actions

- Test with adversarial inputs before production

Common mistakes:

- Granting admin-level API access by default

- No monitoring for agent loops or stuck states

- Automating a poorly understood human process

- No rate limiting on external API calls

- Skipping incident response planning pre-launch

Measuring ROI and Project Success

Define ROI before development begins. Set a business KPI — time-to-resolution, operational cost savings, error reduction, revenue uplift — and translate it into model-level metrics: precision, recall, latency, cost per inference. Establish a baseline so you can prove the AI system actually moved the needle rather than simply being deployed.

Phased rollouts and A/B testing give you defensible evidence of impact. Just as importantly, include adoption metrics — if users do not actually use the system, model accuracy is irrelevant. The OECD’s analysis in Artificial Intelligence in Society highlights that real-world AI value depends on adoption, trust, and integration into existing processes — not on technical performance alone.
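For a rate-style KPI, such as the share of tickets resolved within target, an A/B comparison can be summarized as relative uplift plus a two-proportion z-test. The sketch below is one common way to do this and is not the only valid analysis; the function name and the example counts are illustrative assumptions.

```python
import math


def ab_uplift(control_successes: int, control_n: int,
              treatment_successes: int, treatment_n: int) -> dict[str, float]:
    """Relative uplift and two-sided p-value from a pooled two-proportion z-test."""
    p_control = control_successes / control_n
    p_treatment = treatment_successes / treatment_n

    # Pooled standard error under the null hypothesis of no difference.
    pooled = (control_successes + treatment_successes) / (control_n + treatment_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / treatment_n))

    z = (p_treatment - p_control) / se if se > 0 else 0.0
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided, normal approximation

    uplift = (p_treatment - p_control) / p_control if p_control > 0 else float("inf")
    return {"relative_uplift": uplift, "z": z, "p_value": p_value}


# Hypothetical counts: baseline workflow vs. AI-assisted workflow.
result = ab_uplift(control_successes=100, control_n=1000,
                   treatment_successes=130, treatment_n=1000)
```

The normal approximation assumes reasonably large samples in both arms; for small pilots, an exact test is the safer choice.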

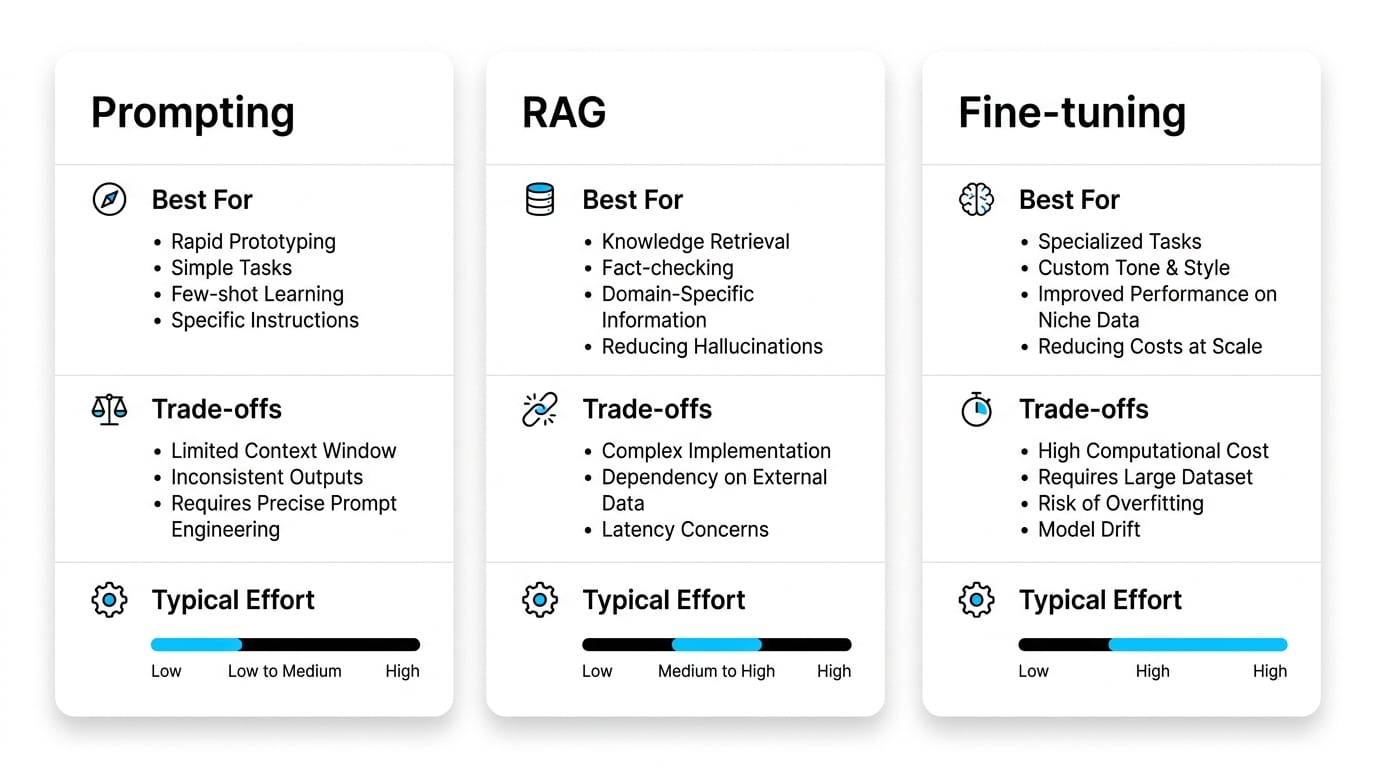

Comparing Decision Approaches: Prompting vs. RAG vs. Fine-tuning

| Approach | Best For | Trade-offs | Typical Effort |
|---|---|---|---|
| Prompting | Quick experiments, behavior tweaks | Limited control, prompt drift over time | Low |
| RAG | Dynamic knowledge, current proprietary data | Requires retrieval infrastructure and tuning | Medium |
| Fine-tuning | Consistent style, specialized domain behavior | Training cost and ongoing maintenance burden | High |

Many enterprise systems combine prompting for rapid iteration, RAG for grounding answers in live internal data, and fine-tuning for consistent tone or domain-specific reasoning. Starting with prompting and layering RAG before committing to fine-tuning is the most cost-efficient progression for most teams.

Managing Data Privacy and Security in AI Solutions

Privacy-by-Design means embedding controls into the architecture rather than bolting them on later. The essentials include data classification, role-based access management, encryption at rest and in transit, comprehensive audit logs, and explicit data retention policies. For systems trained or grounded on personal data, governance must extend across the full lifecycle — collection, processing, storage, and deletion.

A consulting-led approach to compliance ensures that AI initiatives align with applicable data protection requirements, including governmental and sector-specific standards. This is another area where a boutique partner adds value: tailored guidance for organizations operating under specific regulatory regimes, rather than generic compliance playbooks that were written for someone else’s business.

The Role of MLOps in Long-Term Value

MLOps is the backbone of any AI system that needs to live longer than a quarter. Deploying a model is just the starting line; what determines long-term value is automated retraining, robust logging, performance drift monitoring, version control for both data and models, and rollback capability. Without MLOps, every change becomes a risk and every issue becomes a fire drill.
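Drift monitoring often starts with a simple distribution comparison between training data and recent production data. The sketch below uses the Population Stability Index (PSI) on a single numeric feature; PSI is one common choice among several, and the equal-width binning and the small floor value used for empty bins are assumptions of this example.

```python
import math


def population_stability_index(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """PSI between a reference sample (expected) and a recent sample (actual)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the reference range
            counts[idx] += 1
        floor = 1e-6  # avoids log(0) and division by zero for empty bins
        return [max(c / len(values), floor) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


reference = [i / 100 for i in range(100)]        # values spread across 0.0 to 0.99
recent = [0.5 + i / 200 for i in range(100)]     # values concentrated in the upper half
psi = population_stability_index(reference, recent)
```

A commonly cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but those cut-offs should be validated against your own data before they drive automated retraining.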

This is precisely where dedicated, embedded engineering teams — the kind of partnership Sentice provides — pay back their cost many times over. Senior MLOps practitioners build the infrastructure that lets your data scientists iterate safely, your security team sleep at night, and your product team ship updates with confidence. The operational investment compounds: each improvement to the pipeline makes the next model update faster and safer.

Future-Proofing Your AI Strategy

The AI landscape changes monthly. Future-proofing means building modular architectures where models, retrieval layers, and integrations can be swapped without rebuilding the entire system. Abstract your model provider behind an interface, keep your data pipelines portable, and version everything — prompts, embeddings, model weights, and evaluation sets.
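Abstracting the model provider behind an interface can be as small as a single method. In the sketch below, `TextModel`, `EchoModel`, `UppercaseModel`, and the prompt registry are all hypothetical names; a real adapter would wrap a vendor SDK behind the same `generate` signature.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface application code is allowed to depend on."""

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in provider for tests; a real adapter would call a vendor SDK here."""

    def generate(self, prompt: str) -> str:
        return f"[echo] {prompt}"


class UppercaseModel:
    """A second stand-in, to show that providers can be swapped without code changes."""

    def generate(self, prompt: str) -> str:
        return prompt.upper()


# Prompts are versioned data, not string literals scattered through the codebase.
PROMPT_TEMPLATES = {"summarize-v1": "Summarize in one sentence: {text}"}


def summarize(model: TextModel, text: str, prompt_version: str = "summarize-v1") -> dict[str, str]:
    prompt = PROMPT_TEMPLATES[prompt_version].format(text=text)
    # Returning the prompt version alongside the output keeps results traceable.
    return {"prompt_version": prompt_version, "output": model.generate(prompt)}


first = summarize(EchoModel(), "quarterly report")
second = summarize(UppercaseModel(), "quarterly report")  # same call site, different provider
```

Because `summarize` depends only on the protocol, replacing a provider becomes a change to one adapter class rather than to every call site.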

Increasingly, the most valuable systems are agentic workflows that adapt as the business evolves. By investing in modularity now, you ensure that next year’s model improvements become an upgrade, not a rewrite. The OECD AI Principles reinforce this view, emphasizing trustworthy, adaptable systems built for long-term operation rather than short-term wins.

Mapping Business Needs to Practical Capabilities

| Business Need | How a Boutique AI Partner Helps |
|---|---|
| Scaling tech capacity without losing speed | Embedded senior teams aligned to your roadmap and culture |
| Moving from pilot to production | End-to-end MLOps and integration expertise across the full SDLC |
| Protecting sensitive data | Privacy-by-Design and tailored security controls from day one |
| Measuring real ROI | KPI-driven Discovery and phased rollout planning with defined baselines |
| Adapting to evolving AI technology | Modular architectures and agentic workflow design built for change |

Frequently Asked Questions

What is the difference between AI development services and traditional software development?

Traditional software is deterministic — same input, same output. AI systems are probabilistic and depend heavily on data quality, requiring evaluation pipelines, monitoring, and retraining loops that traditional software does not need. This makes the engineering discipline around AI fundamentally different: you are not just shipping code, you are shipping a system that must be continuously measured and maintained against a performance target.

How long does it take to deploy a production-grade AI system?

A POC can be ready in weeks, but a hardened production system typically takes several months because of data preparation, integrations, security reviews, and MLOps setup. Timelines shrink significantly when data is already clean, well-labeled, and accessible, and when KPIs are clearly defined before engineering begins.

Do we need our own data science team to work with an AI development partner?

Not necessarily. A strong partner can deliver end-to-end. However, having an internal product owner or data owner accelerates Discovery and ensures the system aligns with your business reality. Over time, a good partner will also help your internal team build the capabilities to maintain and extend the system independently.

How do we choose between RAG, fine-tuning, and prompting for our use case?

Use RAG for dynamic knowledge where answers must reflect current internal data. Use fine-tuning for consistent style, tone, or specialized domain behavior that needs to be baked into the model. Use prompting for fast iteration and experimentation. Many enterprise systems combine all three, starting with prompting and layering RAG before committing to the cost and maintenance overhead of fine-tuning.

What are the main risks of enterprise AI deployment?

The top risks include hallucinations in generative systems, data leakage through improperly scoped retrieval, model drift degrading performance over time, biased outputs from unrepresentative training data, and poor adoption due to poor user experience or misalignment with actual workflows. Frameworks like the NIST AI Risk Management Framework help systematically map and manage these risks before they reach production.

Can AI development services support regulated industries such as finance and healthcare?

Yes, when the partner builds with Privacy-by-Design, encryption at rest and in transit, comprehensive audit logging, and explicit alignment to applicable regulatory standards. Regulated industries also benefit from explainability requirements — understanding why a model made a decision — which should be part of the architecture from the outset, not added later.

How is AI project success measured beyond model accuracy?

Success is measured through business KPIs — cost reduction, revenue impact, time saved — as well as adoption metrics, system reliability indicators such as latency and uptime, and governance maturity including audit trail completeness and incident response readiness. Model accuracy is a necessary condition, but it is not sufficient on its own to demonstrate that an AI system has delivered value.

When does it make sense to start small with AI rather than committing to a large engagement?

Starting small — with a scoped, time-boxed POC — almost always makes sense when data readiness is uncertain, when the business case has not yet been validated, or when the team is new to AI delivery. A well-run POC de-risks the larger investment and gives you concrete evidence to bring to stakeholders. At Sentice, we build POCs designed to graduate into production — so the work is never throwaway.