Software QA Services: The Complete Guide for Startups and Scaleups

Understand what modern QA services deliver, how to choose the right engagement model, and how a boutique tech partner helps you ship with confidence.

- QA is a strategic SDLC discipline, not a final checkpoint — when done right, it accelerates velocity rather than slowing it down.

- The difference between QA and testing matters: QA prevents defects upstream, while testing-only confirms what was already built.

- Outsourced QA gives scaleups immediate access to specialized skills — automation, performance, security — without long hiring timelines.

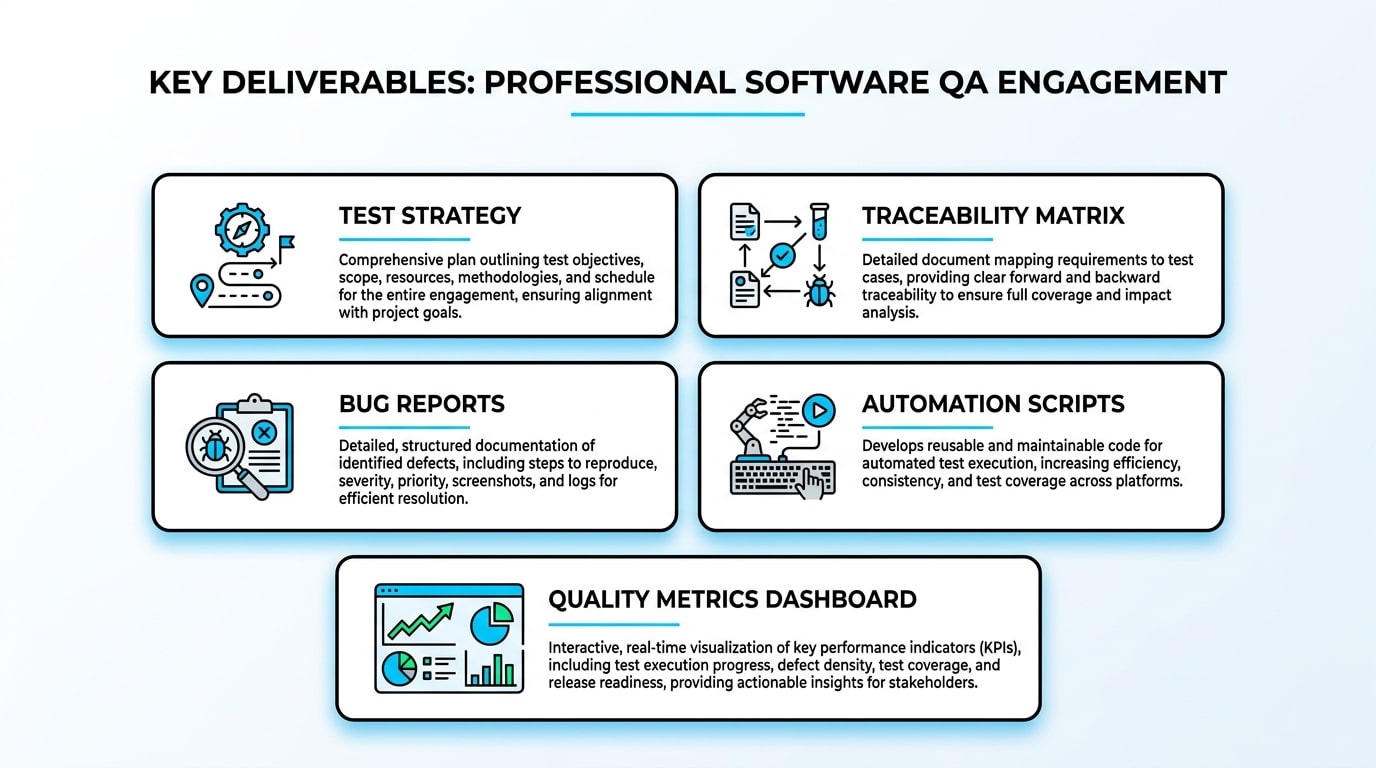

- Professional QA partners produce tangible artifacts: test strategies, traceability matrices, automation suites, and quality metrics dashboards.

- Choosing the right engagement model — dedicated team, managed QA, or augmentation — depends on how much ownership you want to retain.

- Involving a QA lead during requirements and design is consistently the cheapest and most effective way to reduce defects and late-stage rework.

Table of Contents

- What are Software QA Services and Why Do They Matter?

- How Does Software QA Differ from Software Testing?

- Why Are Scaleups Choosing QA Outsourcing Over Internal Hiring?

- What Deliverables Should You Expect from a Professional QA Partner?

- Comparing Engagement Models: Dedicated Teams vs. Managed QA

- Which Types of Testing Are Essential for Modern Software?

- Scaling Quality Through Test Automation

- Which KPIs Actually Measure QA Success?

- What Does a Strong Onboarding Process Look Like?

- Common Mistakes Teams Make When Buying QA Services

- How Do You Ensure Data Security in Outsourced QA?

- How Sentice Aligns with Scaleup Realities

- When Is the Right Time to Bring in QA?

- Frequently Asked Questions

In today’s fast-moving software landscape, shipping reliable products at speed has become one of the hardest balancing acts for startups and scaleups. Engineering teams are pressured to release weekly, sometimes daily, while user expectations for stability, performance, and security keep climbing. This is exactly where software QA services step in — not as a final checkpoint, but as a strategic discipline that protects velocity. When you partner with the right provider, QA becomes less about catching bugs at the end and more about preventing them across the full development lifecycle.

The goal of this guide is to help you — whether you are a CEO, CTO, or Tech Lead — understand what modern QA services actually deliver, how to choose the right engagement model, and how a boutique tech partnership with Sentice can quietly become the difference between a product that scales and one that stalls. We cover everything from engagement models and KPIs to automation strategy and data security, so you can make an informed decision with confidence.

What are Software QA Services and Why Do They Matter?

Software QA services are a structured methodology for ensuring that a product reaches the market in a mature, reliable, and predictable state. They go far beyond running test cases. A professional QA engagement defines test strategy, builds coverage across functional and non-functional areas, manages defect triage, and produces metrics that leadership can act on.

For scaleups, this matters because every regression that reaches production costs engineering time, customer trust, and revenue. Quality assurance, done right, is an accelerator: it lets your team release faster because confidence in the build is higher. Instead of treating QA as a cost center, modern engineering organizations treat it as an investment in throughput — where each prevented defect translates directly into more roadmap capacity for the features that actually move the business forward.

Teams with mature QA practices consistently report higher deployment frequency and lower change failure rates — two of the four core DORA metrics that correlate with elite engineering performance. QA is not the brake; it is what makes high-speed delivery sustainable.

How Does Software QA Differ from Software Testing?

This is one of the most common points of confusion among growing teams. Software testing is the execution layer — the act of running scenarios against the product to surface defects. QA, on the other hand, is the broader discipline that defines how quality is built into every stage of the SDLC, from requirements review to release readiness.

Academic programs consistently frame QA as a process-oriented function that includes verification, validation, and quality standards, while testing sits inside that framework as one set of activities. The practical impact is significant: a QA-led engagement reduces defects upstream, while a testing-only engagement only confirms what was already built — often too late to influence direction or avoid costly rework.

Quality assurance (QA): a process discipline covering the full SDLC, from requirements review through release readiness, with the goal of building quality in at every stage rather than auditing it out at the end.

Software testing: the execution layer within QA, meaning the act of running defined scenarios against the product to surface defects. Essential, but only one component of a mature quality function.

Shift-left: the practice of involving QA activities (review, analysis, test design) earlier in the lifecycle, where the cost of change is lowest and the impact on product direction is highest.

Why Are Scaleups Choosing QA Outsourcing Over Internal Hiring?

Building an internal QA function from scratch takes months. You need to hire test leads, automation engineers, performance specialists, and sometimes security testers. For most scaleups, the timeline simply does not match the roadmap. QA outsourcing solves this by giving you immediate access to specialized skill sets without the overhead of permanent hiring.

You can scale capacity up before a major release and scale down between cycles. You also gain exposure to engineers who have seen multiple products, multiple stacks, and multiple failure patterns. The result is a more flexible cost structure and a faster path to maturity. For founders managing shifting priorities, this elasticity is often the deciding factor between hitting a launch window and missing it.

Hiring a senior QA engineer from job post to productive contributor typically takes 8–14 weeks when accounting for sourcing, interviews, notice periods, and onboarding. A well-structured outsourced QA engagement can reach productive coverage within the first two sprints — often under three weeks from contract signature.

What Deliverables Should You Expect from a Professional QA Partner?

A serious QA engagement produces tangible artifacts, not vague status updates. You should expect a documented test strategy, a traceability matrix linking requirements to test cases, structured bug reports with reproduction steps and severity, automation scripts with maintenance notes, and recurring quality metrics dashboards. Industry guidance such as NIST's guidelines on minimum standards for developer verification of software (NISTIR 8397) describes the kind of evidence-based outputs that mature engineering organizations rely on, including automated testing, static analysis, and review documentation.

When evaluating a partner, ask to see anonymized samples of these deliverables. The quality of their reports tells you more about the partnership you are about to enter than any sales deck ever will. A partner who cannot show you a sample traceability matrix or a sample defect report is one who has not yet built the discipline your product deserves.

- Documented test strategy and scope

- Requirements traceability matrix

- Risk-based test prioritization

- Environment and data setup guide

- Release readiness checklist

- Structured bug reports with steps to reproduce

- Automation scripts with maintenance notes

- Regression and smoke suite results

- Quality metrics dashboard (per sprint or release)

- Post-release defect escape analysis
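To make the traceability matrix concrete, here is a minimal sketch in Python. The requirement and test case IDs are hypothetical, and a real matrix would usually live in a test management tool rather than in code. The point is only that the artifact should let you answer "which requirements have no test?" in one step.

```python
# Hypothetical requirement IDs mapped to the test cases that cover them.
traceability = {
    "REQ-001 login": ["TC-101", "TC-102"],
    "REQ-002 checkout": ["TC-201"],
    "REQ-003 password reset": [],
}


def uncovered_requirements(matrix: dict[str, list[str]]) -> list[str]:
    """Return the requirements that have no linked test case."""
    return [req for req, tests in matrix.items() if not tests]


def coverage_ratio(matrix: dict[str, list[str]]) -> float:
    """Share of requirements with at least one linked test case."""
    if not matrix:
        return 0.0
    covered = sum(1 for tests in matrix.values() if tests)
    return covered / len(matrix)


print(uncovered_requirements(traceability))  # ['REQ-003 password reset']
print(f"{coverage_ratio(traceability):.0%}")  # 67%
```

When you review a partner's sample matrix, the uncovered list is the part to look at first: it shows where release risk is still untested.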

Comparing Engagement Models: Dedicated Teams vs. Managed QA

Choosing the right model depends on how much of the QA function you want to own versus delegate. A dedicated QA team becomes a stable extension of your internal workflow — embedded in your sprints, your tools, and your culture. A managed QA service is outcome-based: the partner owns the entire process, including planning, execution, tooling, and reporting.

The table below maps common business needs to the model that typically fits best, and shows where a partner like Sentice contributes through dedicated engineering teams that align with client culture from day one.

| Business Need | Recommended Model | What You Get in Practice |
|---|---|---|
| Long-term product with deep domain knowledge | Dedicated QA Team | Stable engineers embedded in your sprints, growing product expertise over time |
| Need to delegate full QA ownership | Managed QA Service | End-to-end strategy, execution, and reporting against agreed SLAs |
| Short-term spike before a major release | Project-Based Engagement | Defined scope, fixed timeline, focused regression and acceptance coverage |
| Hybrid in-house plus external capacity | Team Augmentation | External testers and automation engineers extending your internal lead |

Which Types of Testing Are Essential for Modern Software?

For most products, the non-negotiable categories are functional, regression, and API testing. Functional testing confirms that features behave as specified. Regression protects against unintended side effects when new code ships. API testing validates the contracts between services — which becomes critical the moment your architecture moves toward microservices.
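As an illustration of what "validating a contract" means, the sketch below checks a response payload against an agreed set of fields and types. The `ORDER_CONTRACT` fields are hypothetical, and real projects would more likely express the contract in a schema format such as OpenAPI or JSON Schema. This is a simplified stand-in for that idea, not a recommended tool.

```python
# Hypothetical contract for an order response: field name -> expected type.
ORDER_CONTRACT = {"id": int, "status": str, "total_cents": int}


def contract_violations(payload: dict, contract: dict[str, type]) -> list[str]:
    """List every way the payload deviates from the agreed contract."""
    problems = []
    for field, expected in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected):
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    return problems


good = {"id": 7, "status": "paid", "total_cents": 1999}
bad = {"id": "7", "status": "paid"}

print(contract_violations(good, ORDER_CONTRACT))  # []
print(contract_violations(bad, ORDER_CONTRACT))
# ['id: expected int, got str', 'missing field: total_cents']
```

A check like this runs in milliseconds and fails the moment one service changes a field that another depends on, which is why API tests tend to be early automation candidates.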

As the product matures, you scale into performance testing to handle real user load, accessibility testing to meet compliance and inclusion goals, and security testing to protect sensitive data. The right partner integrates all of this into your end-to-end software development lifecycle, so QA is not a silo but a continuous quality layer from design through deployment.

Accessibility testing is increasingly a legal requirement, not just a best practice. WCAG 2.1 AA compliance is referenced in EU accessibility legislation and numerous national standards, and failure to meet it exposes products to regulatory risk — particularly for SaaS platforms serving enterprise customers.

Scaling Quality Through Test Automation

Manual testing is essential for exploratory work and new features, but it cannot keep pace with continuous delivery. Automation is what makes high-frequency releases sustainable. The right approach prioritizes automation for scenarios that are repeated often, carry high business value, and run against stable interfaces. Smoke suites in CI, regression packs, and critical user journeys are the highest-ROI candidates.

The biggest mistake teams make is automating too much too fast, producing brittle suites that break with every UI change. A disciplined partner builds maintainable, layered automation that survives product evolution and actually saves engineering time over the long run — instead of becoming another maintenance burden that erodes the confidence it was meant to build.

How to Prioritize Test Cases for Automation

Start with frequency and risk. Tests that run every release, cover revenue-critical paths, and target stable APIs deliver the fastest payback. Tests against rapidly changing UI elements or one-off scenarios should usually stay manual. The decision is economic, not technical: automation is worth it when the cost of writing and maintaining the script is lower than the cumulative cost of running it manually over its expected lifetime. A senior QA engineer can model this for your specific product in a single planning session.
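The economic rule above can be sketched as a small break-even calculation. The function name, parameters, and hour figures are illustrative assumptions, not benchmarks. Plug in your own estimates.

```python
import math


def automation_break_even_runs(build_hours, maintenance_hours_per_run,
                               manual_hours_per_run):
    """Runs needed before automation has paid back its build cost.

    Returns None when upkeep per run costs as much as (or more than)
    running the test by hand, so automation never pays back.
    """
    saving_per_run = manual_hours_per_run - maintenance_hours_per_run
    if saving_per_run <= 0:
        return None
    return math.ceil(build_hours / saving_per_run)


# Stable API test: 16 h to build, 15 min upkeep per run, 1 h to run manually.
print(automation_break_even_runs(16, 0.25, 1.0))  # 22

# Volatile UI flow: upkeep per run exceeds the manual effort.
print(automation_break_even_runs(16, 1.5, 1.0))  # None
```

If a test runs on every release and you ship weekly, 22 runs is under six months. For a scenario that runs twice a year, the same numbers argue for keeping it manual.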

Strong candidates for automation:

- Critical user journeys (login, checkout, onboarding)

- API contract and integration tests

- Smoke suite running on every CI push

- Regression pack for stable core features

- Data validation and boundary conditions

Usually better kept manual:

- Exploratory testing of new or unstable features

- Rapidly changing UI flows and visual layout checks

- One-off edge cases with low recurrence

- Usability and UX perception testing

- Ad-hoc testing during active sprints

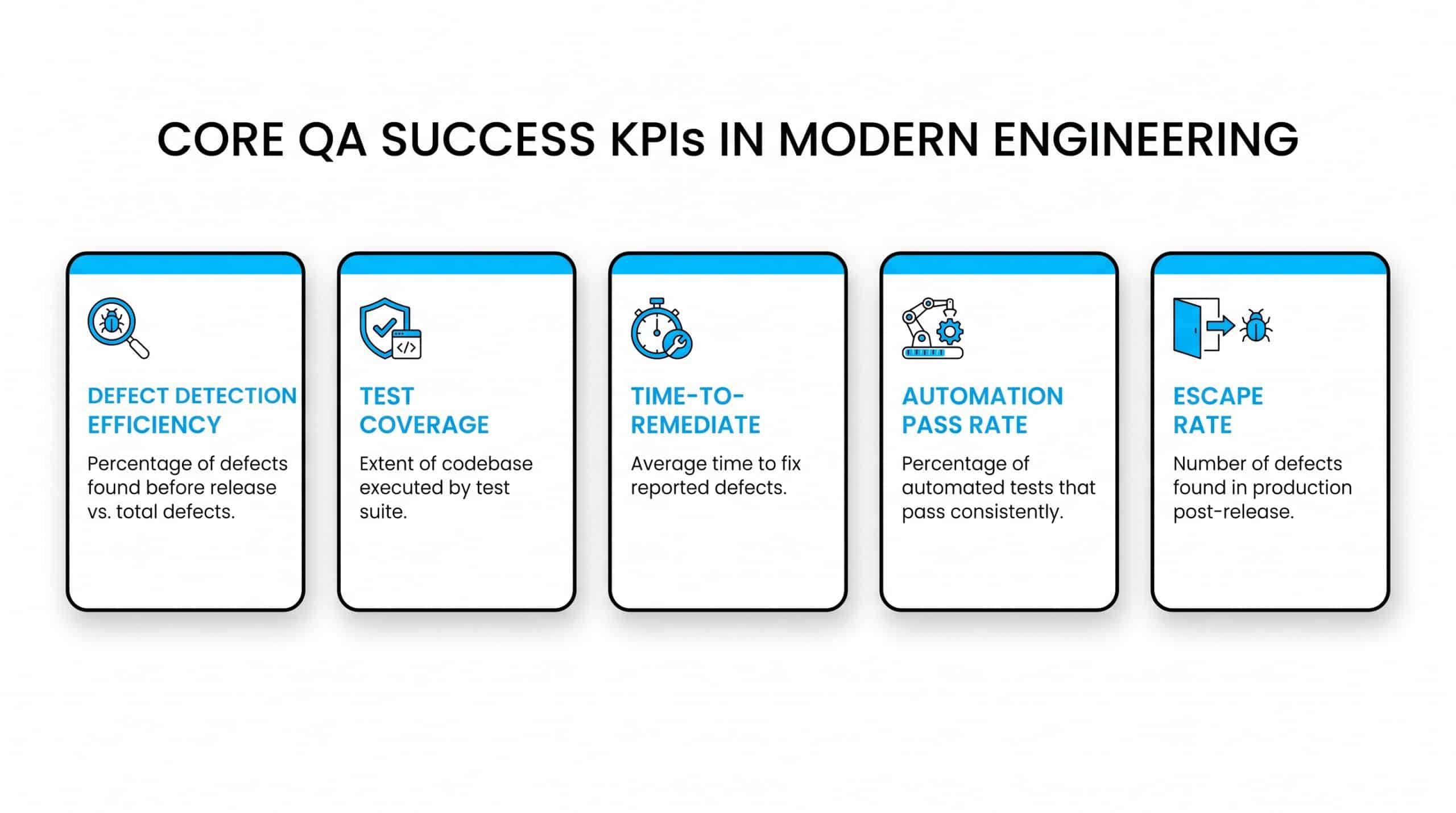

Which KPIs Actually Measure QA Success?

Counting bugs is the weakest possible metric. Mature teams track defect detection efficiency — the percentage of defects caught before production versus those found after. They measure test coverage across critical user flows, time-to-remediate from defect discovery to verified fix, automation pass rate stability, and escape rate per release.

Together, these metrics tell you whether quality is improving, holding, or degrading cycle over cycle. They also give leadership a defensible, data-driven view of QA performance — which is exactly what boards and investors want to see when evaluating engineering maturity in scaling companies. A partner who cannot produce these metrics on a regular cadence is not yet operating at the level your product requires.

| KPI | What It Measures | Target Direction |
|---|---|---|
| Defect Detection Efficiency (DDE) | % of defects found before production vs. after | Higher is better — target above 90% |
| Escape Rate per Release | Defects that reach production per release cycle | Trending downward over time |
| Time-to-Remediate | Hours from defect discovery to verified fix | Shorter — reduces release cycle drag |
| Automation Pass Rate Stability | % of automated tests passing consistently across runs | Above 95% — flaky tests are noise |
| Critical Flow Coverage | % of revenue-critical user journeys covered by automated tests | Higher — prioritize revenue paths first |
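Two of these KPIs are simple enough to compute from raw counts. The sketch below shows one reasonable reading of the definitions in the table. Teams define these metrics slightly differently, so treat the function names and the sample numbers as assumptions for illustration.

```python
def defect_detection_efficiency(found_before_release, found_in_production):
    """Share of all known defects that were caught before production."""
    total = found_before_release + found_in_production
    if total == 0:
        return None  # no defects recorded, so the ratio is undefined
    return found_before_release / total


def consistent_pass_rate(history: dict[str, list[bool]]) -> float:
    """Share of automated tests that passed in every recorded run."""
    if not history:
        return 0.0
    stable = sum(1 for runs in history.values() if all(runs))
    return stable / len(history)


# Hypothetical release: 47 defects caught in QA, 3 escaped to production.
print(f"{defect_detection_efficiency(47, 3):.0%}")  # 94%

# Hypothetical results of four tests across three CI runs.
history = {
    "login": [True, True, True],
    "checkout": [True, False, True],  # flaky: failed once
    "search": [True, True, True],
    "export": [True, True, True],
}
print(f"{consistent_pass_rate(history):.0%}")  # 75%
```

The value of computing these per release is the trend line. A single number says little, but three releases in a row moving the wrong way is a signal worth acting on.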

What Does a Strong Onboarding Process Look Like?

Onboarding is where partnerships succeed or fail. A strong process begins with discovery: understanding the product, users, business goals, and risk areas. Next comes environment setup — including access, test data, and tooling alignment. The team then establishes a baseline through smoke and regression coverage, followed by gradual scaling into deeper functional, API, and automation work.

Communication channels and SLAs should be agreed in writing before the first sprint. The first two to three weeks are typically structured as a pilot, giving both sides confidence before expanding scope. This staged approach prevents the classic failure mode of dropping a large external team into a complex product without context — a pattern that reliably leads to wasted effort, low coverage quality, and early disengagement.

By the end of week one, your QA partner should have documented the product’s risk areas, confirmed access to all test environments, agreed on defect severity definitions and triage SLAs, and delivered an initial smoke suite that runs in your CI pipeline. If none of those things have happened, the engagement is already behind.

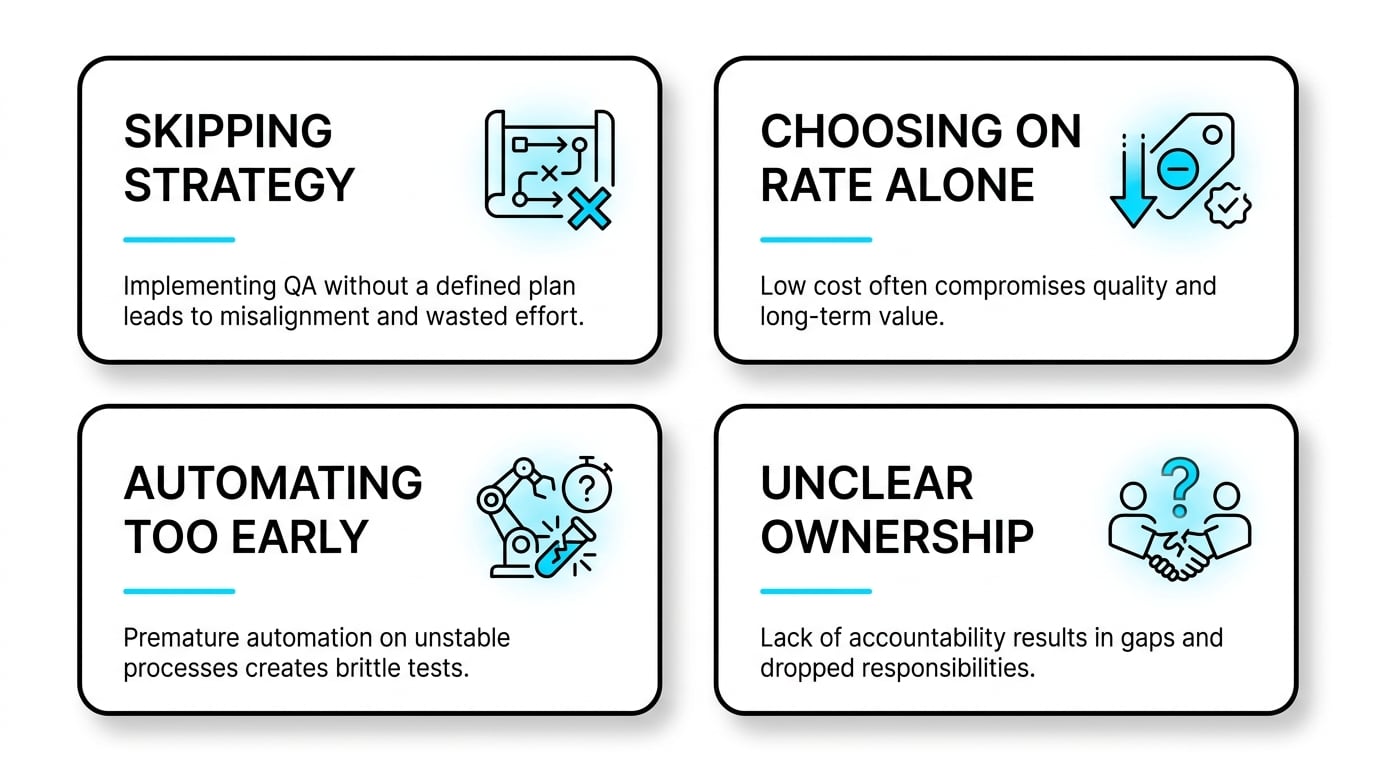

Common Mistakes Teams Make When Buying QA Services

Several patterns repeat across failed engagements. The first is treating QA as pure execution and skipping strategy — which leads to busywork rather than risk reduction. The second is choosing a partner purely on hourly rate, ignoring the cost of poor reporting and missed defects. The third is automating before the product is stable, producing fragile suites that need constant rework.

The fourth is unclear ownership, where neither the internal team nor the partner is accountable for release readiness. Avoiding these mistakes starts with treating QA selection as a strategic decision, not a procurement transaction, and aligning on outcomes rather than activity. Ask every candidate partner how they measure success, not just what they test.

- Skipping test strategy in favour of immediate execution — leads to low-value, redundant coverage

- Selecting on hourly rate alone — the hidden cost of missed defects far exceeds any rate saving

- Automating unstable interfaces — produces brittle suites that consume more time than they save

- Undefined release readiness ownership — creates gaps at the exact moment accountability matters most

- No agreed defect severity definitions — results in triaging arguments instead of fixes during crunch

How Do You Ensure Data Security in Outsourced QA?

Security is non-negotiable when external teams touch your systems. Best practice starts with isolating test environments from production, using anonymized or synthetic data wherever real user data is not strictly required, and enforcing role-based access controls. Contracts should include confidentiality clauses, defined data handling procedures, and clear breach notification timelines.

Engineers working on sensitive products should be onboarded with the same security training as internal staff. When these controls are built in from day one, outsourcing QA becomes no riskier than running it internally — and often more disciplined, because the boundaries are explicit rather than assumed. A mature partner will present their security framework proactively, not only in response to your questions.

Before engaging any external QA partner, confirm: isolated test environments with no production access, a data anonymization or synthetic data policy, NDA and confidentiality agreement in place, role-based access reviewed and revoked on offboarding, and a defined breach notification SLA. These are table-stakes, not premium add-ons.
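As one example of what a data handling policy can look like in practice, the sketch below replaces real email addresses with stable stand-ins before data is copied into a test environment. It uses a keyed hash from Python's standard library. The function name, the key, and the `example.test` domain are assumptions for illustration. Note that this is pseudonymization of a single field, not full anonymization: other identifying columns need their own treatment, and the key must stay outside the test environment.

```python
import hashlib
import hmac


def pseudonymize_email(email: str, secret_key: bytes) -> str:
    """Replace a real address with a stable stand-in derived from a keyed hash."""
    normalized = email.strip().lower().encode("utf-8")
    digest = hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()
    # The .test top-level domain is reserved, so no mail can reach a real inbox.
    return f"user_{digest[:12]}@example.test"


key = b"replace-with-a-secret-kept-outside-test-envs"  # hypothetical key
alias = pseudonymize_email("Jane.Doe@customer.com", key)

# The same input always maps to the same alias, so joins across tables still work.
print(alias == pseudonymize_email(" jane.doe@customer.com ", key))  # True
print(alias.endswith("@example.test"))  # True
```

Because the mapping is deterministic for a given key, testers can still follow one user's records across tables without ever seeing the real address.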

How Sentice Aligns with Scaleup Realities

Scaleups operate under constraints that large enterprises rarely face: tight runways, shifting priorities, and the constant need to prove product maturity to customers and investors. The table below maps common scaleup needs to how a boutique partnership model addresses them in practice — without forcing you into a rigid template that was designed for a different kind of company.

| Business Need | How a Boutique Partner Helps in Practice |
|---|---|
| Need to scale QA capacity quickly before a release | Embedded engineers ramp inside your sprints rather than running parallel processes |
| Limited budget for full-time QA leadership | Senior QA expertise applied selectively where it changes outcomes, not uniformly across all coverage |
| Mixed manual and automation requirements | Layered strategy that starts manual, automates what is stable, and stays maintainable as the product evolves |
| Cultural fit with a small, fast-moving team | Aligned communication style and time zone overlap with your core engineering hours — culture-aligned from day one |

At Sentice, we think of ourselves as an extension of your team — not a vendor you manage at arm’s length. Our approach is built around the principle of starting small, growing fast: we earn the right to expand scope by delivering measurable quality improvement in the first few sprints.

When Is the Right Time to Bring in QA?

The honest answer is earlier than most teams think. Involving a QA lead during requirements and design catches ambiguity before it becomes code — which is the cheapest possible time to fix it. By the time a feature reaches staging, the cost of changing it has multiplied. For early-stage products, a lightweight QA presence focused on critical flows is usually enough.

As the product matures and the user base grows, the engagement deepens into automation, performance, and security. The mistake to avoid is waiting until quality problems are already visible to customers — by then, you are paying for both the fix and the reputational damage. Bringing QA in one sprint earlier than feels necessary is almost always the right call.

Widely cited defect cost models hold that a defect caught during requirements costs roughly 1x to fix, the same defect caught during system test costs about 10x, and one found in production can cost 100x or more once you factor in engineering time, customer impact, and brand erosion. The exact multipliers vary by study and by product, but the direction is consistent. The economics of shift-left QA are not theoretical; they show up directly on your engineering cost per feature.

Frequently Asked Questions

What is the main difference between an internal QA team and an external QA partner?

Internal teams build deep, persistent product knowledge but take longer to scale and require permanent investment. External partners bring immediate specialized skills, flexible capacity, and exposure to patterns from many products — with a faster ramp and a more elastic cost structure. Many scaleups run a hybrid model with an internal QA lead and an external partner handling execution and automation at scale.

How do project requirements influence the pricing of QA services?

Pricing is shaped by scope, product complexity, automation depth, compliance and security requirements, and release frequency. Products with frequent CI/CD releases need ongoing automation maintenance. Performance and security testing add specialized tooling costs. Wide device and browser matrices increase manual coverage hours. Clear scope and prioritized risk areas are the most effective way to keep pricing predictable and fair for both sides.

When is the earliest stage in the SDLC to involve a QA lead?

Ideally during requirements and design. A QA lead reviewing user stories early surfaces ambiguity, missing acceptance criteria, and risk areas before any code is written. This shift-left approach is consistently the cheapest way to improve quality and the most effective way to reduce late-stage rework. Even a part-time QA presence in planning sessions delivers measurable return within the first two sprints.

How can a company determine if their software is ready for full-scale automation?

Look for stability in the interfaces being tested, repeatable test scenarios, and a release cadence frequent enough to justify maintenance cost. If the UI or API is changing weekly, focus automation at the layers that are stable — typically API and core business logic — and keep volatile areas manual until they settle. Full-scale automation before stability is established produces brittle suites that consume more engineering time than they save.

How do you measure ROI on QA services?

Track defects prevented before production, reduction in time-to-remediate, automation hours saved versus manual execution, and the trend in production incidents over time. Combined, these show whether the engagement is reducing risk and freeing engineering capacity — which is the real return. A well-structured QA engagement typically pays for itself within two to three release cycles through reduced incident response cost alone.

What should a QA partner’s first sprint deliverable look like?

By the end of the first sprint, you should have a documented test strategy covering scope, risk areas, and tool choices; an initial smoke suite running in your CI pipeline; a defect log with at least one confirmed fix cycle completed; and agreed SLAs for defect triage and reporting. If none of these exist after sprint one, the engagement needs a structured reset before scope expands.

Is it possible to run QA services alongside an existing internal QA function?

Yes — and this is one of the most effective models. A team augmentation engagement places external automation engineers or performance testers alongside your internal QA lead, who retains ownership of strategy and release sign-off. The external team handles execution capacity, specialist skills, and tooling, while your internal lead focuses on product knowledge and stakeholder communication. Clear role boundaries from the start prevent duplication and keep the engagement cost-efficient.

How does outsourced QA affect the speed of the development cycle?

Done well, it accelerates it. When QA is embedded in the sprint and defects are caught early, developers spend less time context-switching back to features they considered finished and more time building new ones. Automation that runs on every CI push gives the team immediate confidence to merge and release. The net effect is a shorter lead time for changes and a higher deployment frequency, both core DORA metrics that signal engineering maturity to investors and enterprise customers alike.