MVP Development Services: A Complete Guide to Building, Scoping, and Validating Your First Product

Everything founders and innovation leaders need to plan, build, and measure an MVP that generates real validated learning.

- An MVP is a controlled experiment, not a lite product — its purpose is to validate your core hypothesis with real user behavior, not to ship a smaller version of your dream feature set.

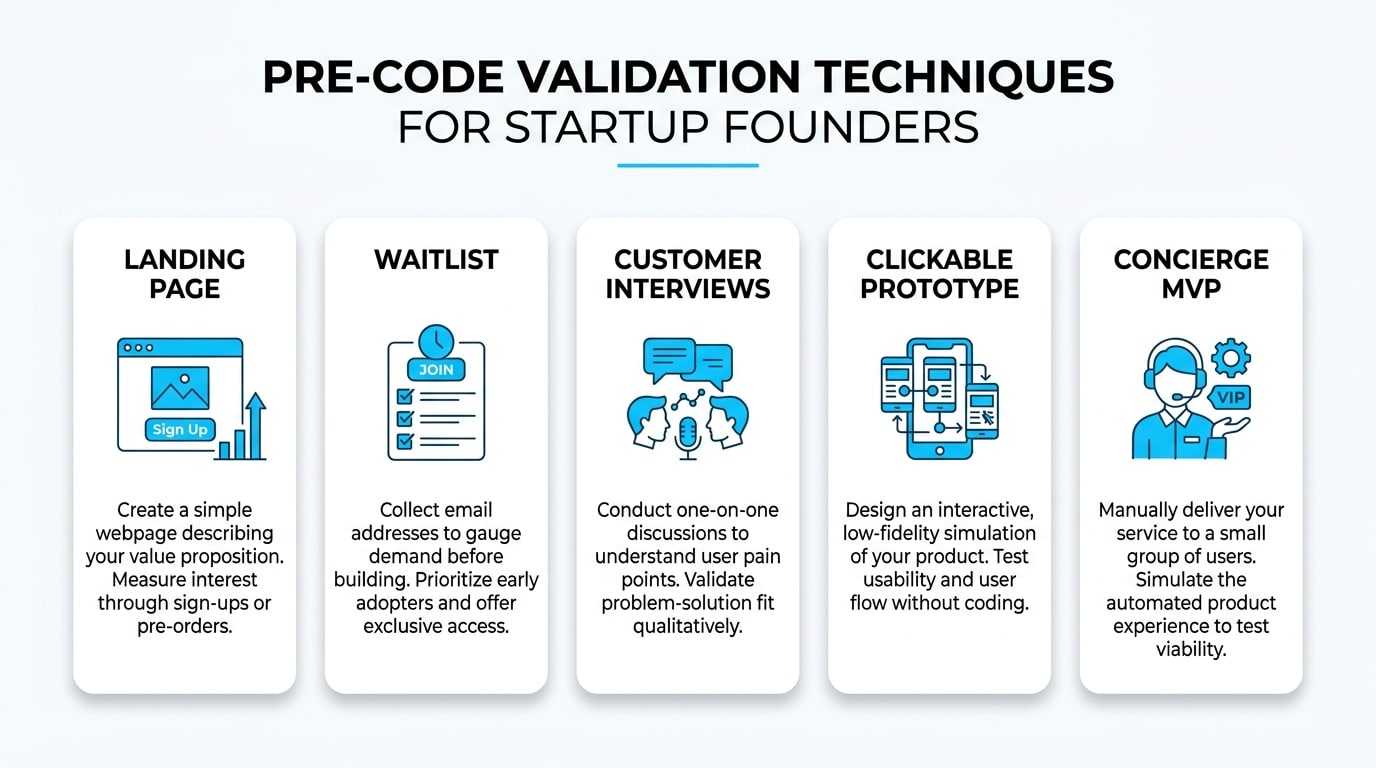

- Validation should begin before a single line of code is written, using landing pages, concierge tests, and targeted interviews to sharpen scope and reduce risk.

- Scope control is the single biggest driver of timeline and budget: filter every feature against one North Star Action before committing it to a sprint.

- A focused MVP typically takes six to sixteen weeks; the primary speed driver is clarity — clear hypothesis, clear decision-makers, clear sprint cadence.

- Post-launch success is measured by activation, retention, core feature usage, and conversion — not downloads or total signups.

- The right tech partner operates as an embedded extension of your team, bringing discovery, UX, engineering, QA, and analytics under one roof so you can move from vision to validated product together.

Table of Contents

- What are MVP development services and who is this for?

- What is a Minimum Viable Product (MVP) and what is its goal?

- How does a Startup MVP differ from a “Version 1”?

- When validation should happen before a single line of code

- How do you prioritize features without inflating scope?

- How long does it actually take to develop an MVP?

- How much do MVP development services cost and what affects the price?

- Fixed Price vs. Time and Materials for MVP development

- What exactly is included in MVP development services?

- What does a proper MVP workflow look like?

- How is MVP success measured post-launch?

- Common mistakes in MVP development and how to avoid them

- Must an MVP be scalable and secure from day one?

- Which technology stack is most suitable for an MVP?

- What should you prepare before contacting an MVP development agency?

- How Sentice helps your business move from idea to validated MVP

- How do you decide between iterating and rebuilding after the MVP?

- Frequently asked questions

For today’s founders and innovation leaders, the gap between a great idea and a market-ready product is where most ventures stumble. You have limited runway, a board asking for traction, and a market that won’t wait. The temptation to build everything at once is strong — but it’s also the fastest path to burning capital before learning if anyone actually wants what you’re building. This is where strategic MVP development services change the equation: instead of betting your budget on assumptions, you build a focused, functional product designed to validate your core hypothesis with real users.

In this guide, we’ll walk you through everything you need to know to plan, scope, build, and measure an MVP that gives your business a real shot at product-market fit. Whether you’re a pre-seed founder preparing your first raise or a corporate innovation team seeking rapid proof of concept, Sentice has helped teams across both categories move from vision to validated product — as a true extension of their team, end-to-end.

What are MVP development services and who is this for?

MVP development services are an end-to-end engagement designed to take a business idea from concept to a functional, deployable product that tests a clear market hypothesis. The deliverable is not a “lite version” of the final dream — it’s a sharp, intentional experiment built to generate validated learning. A trusted product development partner brings together discovery, UX, engineering, QA, and analytics under one roof so your team can move from vision to a market-ready technical solution without juggling vendors.

This model is built for three distinct audiences: ideation-stage startups that need to de-risk their first investment of time and capital; pre-seed founders preparing for their first raise who need something real to show investors; and corporate innovation teams that need rapid proof of concept before committing internal resources to a full build. Wherever you are in that spectrum, the underlying discipline is the same — build less, learn faster, decide earlier.

- First-time founders who need to de-risk a market hypothesis and generate investor-ready evidence before committing to a full build.

- Growing companies launching a new product line or adjacent feature set that needs validation before being woven into the core platform.

- Corporate units that need a contained, fast-moving experiment to validate an internal or external product concept before seeking budget approval.

What is a Minimum Viable Product (MVP) and what is its goal?

A Minimum Viable Product is the smallest functional version of your product that can deliver real value to early users while producing meaningful data about their behavior. The goal is not to launch something “small” — it’s to run a controlled experiment that proves or disproves the assumptions your business depends on. Think of it as your first scientific test: you define the hypothesis, build only what’s needed to test it, and let real usage tell you whether to persevere, refine, or pivot.

Done well, an MVP shifts decision-making from opinion to evidence. Rather than debating what users might want in a boardroom, you put a working product in front of real people and observe what they actually do. That behavioral data is worth far more than any survey, persona document, or market research report — because it reflects genuine intent, not stated preference.

An MVP is not about shipping something imperfect. It’s about shipping something intentionally scoped to answer one critical question about your business — and doing so before your runway runs out.

How does a Startup MVP differ from a “Version 1”?

This distinction trips up many founders. A Startup MVP is a validation tool focused on one question: does the core problem-solution fit exist? A Version 1 product, by contrast, assumes that fit is already proven and shifts the focus to growth, polish, and scale. An MVP might tolerate manual workflows, a basic UI, and limited edge-case handling — because the goal is learning, not perfection. A V1 wouldn’t accept those trade-offs, because by that point you owe your users a reliable, polished experience.

Confusing the two leads to over-investment in features that don’t yet matter and under-investment in the data you actually need. Teams that treat their MVP like a V1 almost always over-engineer, over-design, and over-build — arriving at launch with a heavier product, a lighter bank account, and less time to iterate before their next funding milestone.

| Startup MVP | Version 1 |
|---|---|
| One hypothesis to test | Fit is proven; now grow |
| Minimal, functional UI | Polished, reliable UX |
| Manual workarounds allowed | Edge cases handled |
| Instrumentation over polish | Scalability built in |
| Speed to learning is the priority | Speed to market is the priority |

Scenario: when validation should happen before a single line of code

Imagine a founder with a vision for a new B2B platform. Before committing six months of development, the smarter move is to test demand through a landing page, targeted outreach, or a “concierge” version where the service is delivered manually behind the scenes. If those experiments generate signal — sign-ups, paid pilots, or strong qualitative feedback — the MVP scope becomes sharper and far less risky. Validation isn’t a phase that comes after development; it’s a discipline that runs alongside it from day one.

What kinds of validation exist before writing code?

Landing pages with clear value propositions, waitlists, paid ad tests, founder-led customer interviews, clickable prototypes, and concierge MVPs where you deliver the value manually are all proven pre-code validation tools. Each technique reduces uncertainty and refines what your MVP must actually do — meaning the engineering work that follows is tighter, faster, and grounded in genuine market signal rather than assumption.

What kinds of validation should be done post-launch?

Activation rates, retention curves, core feature usage, conversion to paid, and qualitative feedback from active users are the post-launch signals that matter. The combination of quantitative data and direct user conversations is what turns a launched MVP into an actionable roadmap. This iterative learning loop — build, measure, learn, repeat — is the engine that drives product-market fit rather than hoping to stumble into it.

How do you prioritize features without inflating scope?

Start by defining your North Star Action — the single user behavior that proves your product delivers value. Then build only the flow that gets a user from sign-up to that action and back again. Every other feature gets parked in the backlog, not deleted — just deferred until the core loop is validated. This isn’t about being minimalist for its own sake; it’s about protecting your ability to learn before your runway runs out.

Sentice teams apply this filter actively during discovery, helping founders separate “must-have for validation” from “nice-to-have for scale.” It’s one of the most valuable conversations that happens before a single line of code is written, because it shapes every sprint that follows. Teams that arrive at development with a clear North Star Action consistently ship faster and spend less time reworking features that users don’t actually need at this stage.

What is scope control and why is it critical?

Scope control is the discipline of saying “not yet” to features that don’t serve your current learning goal. Without it, timelines slip, budgets leak, and the MVP becomes a watered-down V1 that proves nothing. The most effective way to maintain scope control is to document the North Star Action in writing, get every stakeholder to sign off on it, and require a formal justification before any new feature is added to the sprint.

For every proposed feature, ask: “Does this help a user reach the North Star Action, or does it help us measure whether they got there?” If the answer to both is no, the feature doesn’t belong in the MVP.
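The two-question filter above can be written down as a small triage routine. This is a minimal sketch, not a prescribed tool: the `Feature` fields, the `triage` function, and the sample features are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    helps_reach_action: bool    # moves a user toward the North Star Action
    helps_measure_action: bool  # helps measure whether users got there


def triage(features: list[Feature]) -> tuple[list[str], list[str]]:
    """Split candidate features into MVP scope and deferred backlog."""
    mvp, backlog = [], []
    for feature in features:
        if feature.helps_reach_action or feature.helps_measure_action:
            mvp.append(feature.name)
        else:
            backlog.append(feature.name)  # parked for later, not deleted
    return mvp, backlog


# Hypothetical candidates for illustration only.
candidates = [
    Feature("Sign-up flow", True, False),
    Feature("Activation event tracking", False, True),
    Feature("Dark mode", False, False),
]
mvp_scope, deferred = triage(candidates)
print("MVP:", mvp_scope)
print("Backlog:", deferred)
```

The value is less in the code than in forcing each feature to carry an explicit yes or no answer to both questions before it enters a sprint.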

How long does it actually take to develop an MVP?

A focused MVP typically takes between six and sixteen weeks, depending on scope, integrations, and how prepared the founding team is when development starts. Speed comes from clarity: a clear hypothesis, a clear North Star Action, clear decision-makers, and a sprint cadence with regular demos. Teams that can answer questions and make decisions within hours rather than days routinely cut weeks off their timeline — not because the engineering is faster, but because the friction that slows most projects (waiting for approvals, unclear acceptance criteria, late-stage scope changes) is eliminated before it accumulates.

Which factors tend to increase development time?

Complex payment or compliance integrations, role-based permission systems, heavy data migrations, frequent direction changes without proper change control, and slow stakeholder feedback loops are the most common timeline killers. Each adds friction that compounds across sprints. A discovery phase that surfaces these risks upfront — before development begins — is the most effective way to keep the build phase on track.

How much do MVP development services cost and what affects the price?

Cost is driven by scope, UX complexity, third-party integrations, security and compliance requirements, and delivery speed. Rather than pricing “per screen,” experienced teams price by deliverables: discovery, UX/UI, engineering, QA, DevOps, analytics, and post-launch support. In practice, integrations and design depth, not headcount alone, tend to move the budget most.

Which cost components are often overlooked by founders?

Analytics instrumentation, infrastructure monitoring, automated testing, and post-launch iterations are routinely underestimated or excluded from initial quotes. These aren’t optional add-ons — they’re what turn a launched product into a learning machine. An MVP without analytics is a product you launched but can’t learn from, which defeats the entire purpose of building it.

How can you avoid budget surprises?

Define deliverables explicitly, set milestone-based payments, and agree on a written change control process before development starts. Surprises usually come from undocumented assumptions, not bad intentions. Any engagement that lacks a written scope of work and a defined change request process should be approached with caution, regardless of how promising the initial conversations feel.

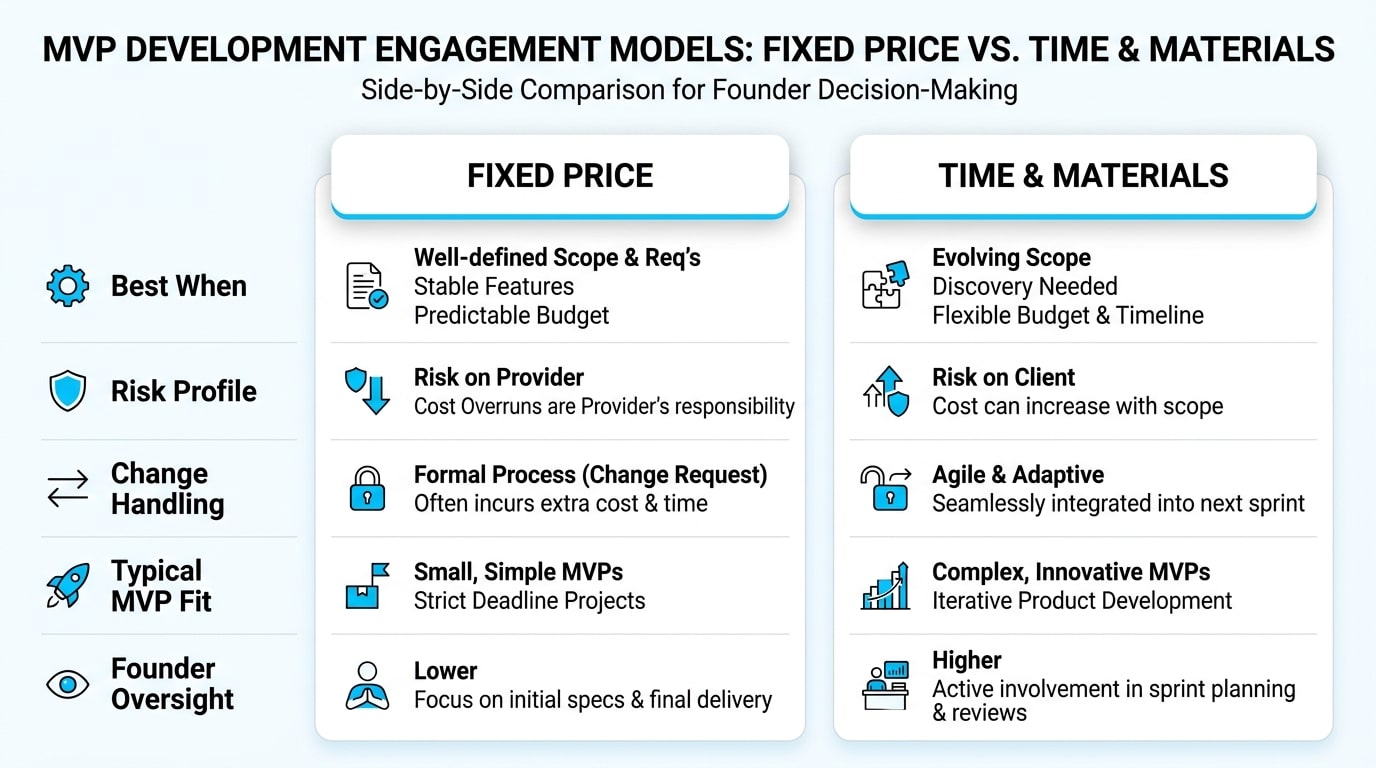

Comparison: Fixed Price vs. Time and Materials for MVP development

This is one of the most common decisions founders face when engaging an MVP development partner. The right model depends on how much certainty you have about scope and how much you expect to learn — and therefore change — during the build itself.

| Dimension | Fixed Price | Time and Materials |
|---|---|---|
| Best when | Scope is well-defined and stable | Scope will evolve based on user feedback |
| Risk profile | Lower budget risk, higher scope rigidity | Flexible scope, requires active budget governance |
| Change handling | Formal change requests with cost impact | Continuous reprioritization within sprint |
| Typical fit for MVP | Discovery sprint or narrow pilot | Iterative build phase |
| Founder oversight | Lower day-to-day involvement required | Higher involvement via demos and backlog reviews |

When does a Fixed Price model work effectively?

Fixed price works well when the discovery phase has produced a tight, documented scope, the team has prior experience with the technology stack, and genuine uncertainty is low. It provides budget predictability that some founders and CFOs prefer — but it requires you to resist the temptation to change direction mid-build without going through the formal change request process.

How can you protect yourself when using Time and Materials?

Set a budget cap per sprint, require a working demo at the end of each cycle, and tie continued investment to KPI movement rather than feature completion. This keeps the flexibility of T&M without losing the accountability that keeps projects on track. A shared, transparent backlog reviewed weekly is the most effective control mechanism in a T&M engagement.
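As a rough illustration of those three guardrails, the check below flags a sprint that exceeded its cap, ended without a working demo, or failed to move the KPI it was funded to move. The `SprintReview` fields and the sample numbers are assumptions made for this sketch; a real engagement would define them in the contract and backlog.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SprintReview:
    spend: float          # actual spend for the sprint
    budget_cap: float     # agreed cap per sprint
    demo_delivered: bool  # a working demo was shown at the end of the cycle
    kpi_before: float     # tracked KPI at sprint start
    kpi_after: float      # tracked KPI at sprint end


def review_flags(review: SprintReview) -> list[str]:
    """Return the guardrails this sprint violated (empty list means all clear)."""
    flags = []
    if review.spend > review.budget_cap:
        flags.append("over budget cap")
    if not review.demo_delivered:
        flags.append("no working demo")
    if review.kpi_after <= review.kpi_before:
        flags.append("KPI did not move")
    return flags


# Hypothetical sprint: slightly over cap, demo shown, activation rate flat.
flags = review_flags(SprintReview(21_000, 20_000, True, 0.30, 0.30))
print(flags)
```

A flagged sprint is a prompt for a conversation in the weekly backlog review, not an automatic stop.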

What exactly is included in MVP development services?

Strong deliverables make the difference between a project you can build on and one you’ll need to redo. At minimum, expect a written scope and hypothesis document, user flows and UI screens, production-ready code, deployment to a live environment, analytics instrumentation, monitoring, and a prioritized roadmap for the first iteration cycle. Anything less and you’ve bought a demo, not a learning platform — and demos don’t generate the behavioral data you need to make your next funding or product decision.

What are the key deliverables in the Discovery phase?

Discovery should produce validated hypotheses, target audience profiles, user journey maps, a prioritized feature list aligned to the North Star Action, and a short-term roadmap tied to business KPIs. The discovery phase is where a responsible partner earns your trust by asking hard questions, challenging assumptions, and producing a scope that the entire team can hold each other accountable to.

What comes out of the Build and Launch phase?

Expect source code in version-controlled repositories, automated tests covering critical paths, CI/CD pipelines, deployment to a production environment, monitoring dashboards, basic technical documentation, and analytics events that map directly to your success metrics. These aren’t bonuses; they’re the baseline for a professional engagement.
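To make “analytics events that map directly to your success metrics” concrete, here is a minimal sketch of an event plan enforced in code. The event names, the `EVENT_TO_METRIC` mapping, and the `build_event` helper are hypothetical; they do not correspond to any particular analytics vendor's API.

```python
import json
from datetime import datetime, timezone
from typing import Optional

# Hypothetical tracking plan: every event names the success metric it feeds.
EVENT_TO_METRIC = {
    "signup_completed": "activation (denominator)",
    "north_star_action_completed": "activation",
    "session_started": "retention",
    "core_feature_used": "core feature usage",
    "subscription_started": "conversion",
}


def build_event(user_id: str, name: str, properties: Optional[dict] = None) -> dict:
    """Build a JSON-serializable event; reject names outside the tracking plan."""
    if name not in EVENT_TO_METRIC:
        raise ValueError(f"Unplanned event: {name!r}")
    return {
        "user_id": user_id,
        "event": name,
        "metric": EVENT_TO_METRIC[name],
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "properties": properties or {},
    }


event = build_event("user-123", "north_star_action_completed", {"plan": "trial"})
print(json.dumps(event, indent=2))
```

Rejecting unplanned event names is a deliberate choice in this sketch: it keeps the event stream aligned with the KPIs agreed in discovery instead of growing ad hoc.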

What does a proper MVP workflow look like?

The workflow is cyclical, not linear: Define Hypothesis, Build Minimum, Launch, Measure, then Iterate or Pivot. Each cycle should produce a tangible learning, not just a feature release. Sentice teams operate as an embedded extension of your team, running this loop in two-week sprints with shared backlogs, joint demos, and transparent decision logs — so founders always know what’s being built, why, and what it’s teaching the business.

What happens during a Discovery Sprint?

A discovery sprint covers alignment on business goals, KPI definition, hypothesis prioritization, scope finalization, and a delivery plan with named milestones and explicit acceptance criteria. It typically runs one to two weeks and produces the documents that govern every subsequent build sprint. Skipping it almost always costs more time and money than it saves.

How does the Development and QA phase function?

Engineering builds against user stories with clear acceptance criteria written by the product owner. QA validates each story against those criteria before it’s marked complete. The product owner reviews progress at sprint demos, and any scope changes go through the agreed change control process rather than being absorbed informally. This discipline is what keeps the build phase predictable.

What happens during the Launch and Measure cycle?

This cycle covers a controlled release to early users, monitoring of activation and retention from day one, qualitative interviews with active and churned users, and a structured decision review that drives the next sprint’s priorities. The output is not just metrics: it’s a documented decision about whether to persevere on the current hypothesis, refine specific elements, or pivot to an adjacent opportunity.

In our experience, teams that instrument their MVP with analytics before launch reach their first significant product decision two to four weeks sooner than teams that add measurement as an afterthought. Instrumentation is not a post-launch task.

How is MVP success measured post-launch?

Success is measured by behavior, not buzz. The metrics that matter are activation (did users reach the North Star Action?), retention (did they come back?), core feature usage (are they using what was built?), and conversion (are they willing to pay or commit?). Traffic and downloads alone tell you nothing about whether your product solves a real problem for real people — they tell you only that you’re good at marketing, which is a different skill entirely.
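The sketch below shows one way activation and conversion could be derived from a raw event log. It assumes a simple list of `(user_id, event_name)` pairs and hypothetical event names; the exact definitions (for example, whether conversion is measured against activated users or all signups) are choices your team should make explicitly.

```python
def rate(numerator: int, denominator: int) -> float:
    """Safe ratio that returns 0.0 when there is no denominator yet."""
    return numerator / denominator if denominator else 0.0


def funnel_metrics(events: list[tuple[str, str]]) -> dict[str, float]:
    """Compute activation and conversion from (user_id, event_name) pairs."""
    users_by_event: dict[str, set[str]] = {}
    for user_id, name in events:
        users_by_event.setdefault(name, set()).add(user_id)

    signed_up = users_by_event.get("signup_completed", set())
    # Activated: signed-up users who reached the North Star Action.
    activated = users_by_event.get("north_star_action_completed", set()) & signed_up
    # Converted: activated users who started paying (an assumption of this sketch).
    converted = users_by_event.get("subscription_started", set()) & activated

    return {
        "activation_rate": rate(len(activated), len(signed_up)),
        "conversion_rate": rate(len(converted), len(activated)),
    }


# Hypothetical log: 4 signups, 2 activated, 1 converted.
events = [
    ("u1", "signup_completed"), ("u2", "signup_completed"),
    ("u3", "signup_completed"), ("u4", "signup_completed"),
    ("u1", "north_star_action_completed"),
    ("u2", "north_star_action_completed"),
    ("u1", "subscription_started"),
]
metrics = funnel_metrics(events)
print(metrics)
```

Note that total signups appear only as a denominator here, which is the point: on their own they say nothing about value delivered.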

What are some KPI examples by product type?

For B2B products: demo requests, pilot conversion rates, time-to-value (how quickly a new account reaches its first meaningful outcome), and weekly active accounts. For B2C products: Day 1 and Day 7 retention rates, session frequency, task completion rates within the core flow, and free-to-paid conversion. The right KPIs are the ones tied to a business decision you will actually make based on the result.
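Day 1 and Day 7 retention can be computed in a few lines once signup dates and activity dates are available. This sketch assumes the “classic” definition (a user counts as retained on Day N only if active exactly N days after signup); rolling or bracketed definitions also exist, so state which one your dashboard uses. The function name and sample data are illustrative.

```python
from datetime import date, timedelta


def day_n_retention(
    signups: dict[str, date],
    activity: dict[str, set[date]],
    n: int,
) -> float:
    """Share of signed-up users who were active exactly n days after signup."""
    if not signups:
        return 0.0
    retained = sum(
        1
        for user, signup_day in signups.items()
        if signup_day + timedelta(days=n) in activity.get(user, set())
    )
    return retained / len(signups)


# Hypothetical cohort of four users.
signups = {
    "u1": date(2024, 1, 1),
    "u2": date(2024, 1, 1),
    "u3": date(2024, 1, 2),
    "u4": date(2024, 1, 2),
}
activity = {
    "u1": {date(2024, 1, 2), date(2024, 1, 8)},
    "u2": {date(2024, 1, 2)},
    "u3": {date(2024, 1, 5)},
}
day1 = day_n_retention(signups, activity, 1)
day7 = day_n_retention(signups, activity, 7)
print(f"Day 1: {day1:.0%}, Day 7: {day7:.0%}")
```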

What is a vanity metric and how do you avoid it?

Vanity metrics are numbers that look impressive but don’t correlate to value delivered — total signups without activation, page views without engagement, downloads without retention. Avoid them by asking, for every metric on your dashboard: “What decision will I make differently if this number is higher versus lower?” If the answer is “none,” it’s a vanity metric and it doesn’t belong on your review agenda.

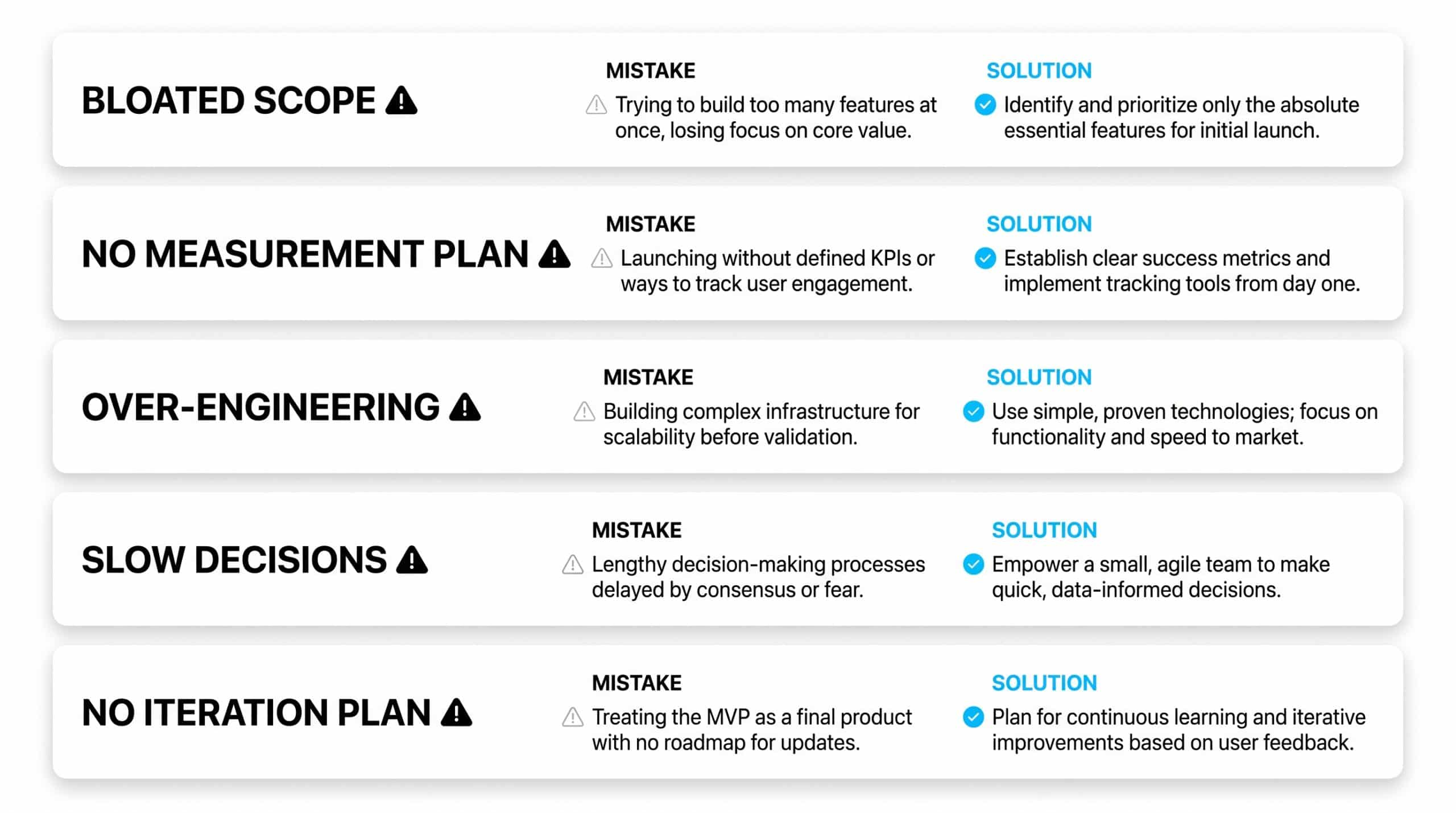

Common mistakes in MVP development and how to avoid them

Most MVPs don’t fail because of bad code. They fail because of bad scoping, missing measurement, over-engineering, or slow decision-making. The teams that succeed are ruthless about what doesn’t go in, decisive when data points to a change, and disciplined about instrumenting their product from day one.

| Mistake | Symptom | How to avoid it |
|---|---|---|
| Bloated scope | Multiple admin panels, heavy permissions, edge-case features from sprint one | Filter every feature against the North Star Action before adding it to the backlog |
| No measurement plan | Product launched with no analytics events or KPI baseline | Define KPIs and instrumentation plan before development starts, not after launch |
| Over-engineering | Microservices architecture for 100 users, custom infrastructure for 10 customers | Build for the next milestone, not a hypothetical future scale that may never arrive |
| Slow decisions | Sprints stalling on stakeholder reviews that take days instead of hours | Name a single decision-maker per workstream and agree on a response SLA before kickoff |
| No iteration plan | Launch is treated as the finish line; team disbands before learning begins | Plan and fund at least two post-launch sprints before launch day so momentum is never lost |

Must an MVP be scalable and secure from day one?

An MVP should be “scalable enough” and “secure enough” — proportional to the risk it carries and the data it handles. You don’t need infrastructure built for a million users when you’re onboarding your first hundred, but you do need foundational architectural choices that let you scale later without rewriting everything from scratch. The goal is avoiding decisions that foreclose future options, not building for a scale that doesn’t yet exist.

On security and privacy, the bar is higher than many founders realize. Handling personal data, even in a small pilot, carries legal obligations in most jurisdictions. A responsible tech partner builds basic authentication, encrypted data storage, access controls, backups, and monitoring into the MVP from the start, not as an afterthought in sprint six. Risk-assessment tools such as the data protection impact assessment (DPIA), which the GDPR requires for high-risk processing, exist to help teams identify and mitigate risks early, before they become legal or reputational problems.

Which technology stack is most suitable for an MVP?

The best stack for an MVP maximizes development speed, talent availability, and long-term maintainability — not novelty. Proven frameworks with large communities, modern JavaScript and TypeScript ecosystems, established backend frameworks, and managed cloud services let your team move fast without painting themselves into a corner when it’s time to hire, scale, or hand off the codebase.

Niche or experimental technologies might generate excitement in engineering conversations, but they often slow hiring, complicate handoffs, and create technical debt before you’ve even validated the product. Sentice favors stack choices that are “boring” in the best possible sense: predictable, well-documented, widely understood, and aligned with where your team will need to grow next. The technology is a means to an end — the end being a validated product that attracts users, investors, and talent.

What should you prepare before contacting an MVP development agency?

Coming prepared shortens discovery dramatically and signals to your prospective partner that you’re a decisive, well-organized team — which in turn attracts better work and better rates. At minimum, have a clear problem statement, a defined target audience, the core hypothesis you want to test, and a short list of must-have features tied directly to that hypothesis. If you have early user research, competitive analysis, or rough wireframes, bring them — even imperfect thinking is useful context.

The clearer your inputs, the faster your team can move from kickoff to shipped product. Founders who arrive at the first meeting with a written one-page brief consistently get better estimates, tighter timelines, and more honest feedback than those who describe their vision verbally and expect the partner to extract the structure themselves. A small investment in preparation before outreach pays compounding returns throughout the engagement.

How Sentice helps your business move from idea to validated MVP

Different founders need different things from a tech partner, and the Sentice boutique model is designed to adapt to where you are — not force you into a one-size-fits-all engagement. The table below maps common business needs to how the Sentice approach addresses them in practice.

| Business need | How Sentice helps in practice |
|---|---|
| Move from vision to working product fast | Embedded senior teams that operate as a true extension of your team, with two-week sprint cadence and weekly demos from day one |
| Predictable budget and timelines | Hybrid pricing models, milestone-based deliverables, and transparent change control so there are no surprises mid-engagement |
| Confidence in technical decisions | Trusted advisors guiding stack choices, architecture, and scope trade-offs aligned with your business goals and growth trajectory |
| Built-in learning loop post-launch | Analytics instrumentation, monitoring, and an iteration roadmap delivered as part of the engagement, not sold as a separate retainer |
| Cultural and time-zone alignment | Boutique partnership model tailored to startups and scaleups, with senior, culture-aligned talent and no offshore hand-offs or black-box delivery |

How do you decide between iterating and rebuilding after the MVP?

Once your MVP has data, the next decision is whether to iterate on what you have or rebuild for scale. Iterate when the core architecture is sound, the team understands the codebase deeply, and the changes ahead are evolutionary rather than structural. The cost of iteration is low when the foundation was well-designed — which is why foundational choices in the MVP phase matter more than they appear to at the time.

Consider a rebuild when fundamental assumptions about the product have shifted significantly, when accumulated technical debt is actively blocking new features, or when the original stack cannot support the next phase of growth without prohibitive rework. The “Pivot or Persevere” decision should be driven by objective user performance data, not internal preferences or sunk-cost thinking. Ideally, this decision is reviewed with a partner who can give you an honest, independent technical and product perspective — one who has no vested interest in keeping the existing codebase alive.

At Sentice, we treat the post-MVP decision review as a formal milestone. We present the data, share our honest technical assessment, and help your team make the iterate-versus-rebuild call with full information — even when the answer changes the shape of our own engagement.

Frequently asked questions

How quickly can we start once we engage an MVP partner?

With a prepared brief and decision-makers in place, discovery typically starts within one to two weeks of contract signing. The biggest delay is usually internal — getting the right people aligned and available for kickoff workshops. Coming prepared with a written problem statement and target hypothesis eliminates most of that lag.

Can we build an MVP without a full design system?

Yes. A lightweight UI built on a proven component library — such as a well-maintained open-source design system — is usually sufficient for validation purposes. A full, bespoke design system can wait until you’ve proven product-market fit and know enough about your users to design for them with confidence. Investing in a design system before validation is a common form of premature optimization.

Do we need a CTO to work with an MVP development agency?

Not necessarily. A strong partner provides the technical leadership needed during the MVP phase — making architecture decisions, guiding stack choices, and managing the engineering team — and can also help you define the profile for a full-time CTO when the time is right. Many of our engagements begin before the founding team has a technical co-founder, and the embedded model bridges that gap effectively.

What happens to the code and IP after the MVP is delivered?

In a properly structured engagement, you own the code outright — including the repositories, all third-party configuration, infrastructure accounts, and every piece of intellectual property created during the project. This should be stated explicitly in the contract before work begins, not clarified after delivery. Ask to see the IP assignment clause before signing any agreement.

How involved do founders need to be during development?

Expect a commitment of a few hours per week for sprint demos, backlog reviews, and real-time decisions. The more available you are, particularly for questions that only the founder can answer, the faster the team can move. Founders who delegate decision-making entirely tend to see longer timelines and more rework than those who stay engaged at each review.

What if our hypothesis is invalidated by the MVP?

That is a successful outcome. You’ve saved months of continued build time and preserved capital that would otherwise have gone into scaling something users don’t want. More importantly, you have concrete behavioral data to inform the next direction — whether that’s a pivot to an adjacent hypothesis, a fundamental rethink of the problem space, or the decision to pursue a different market entirely. Invalidation is information, and information is what you paid for.

Can the same partner continue building the product after the MVP?

Yes. Many Sentice engagements naturally extend into a long-term partnership as the product moves from validation to growth. The embedded model is designed to scale with your team — adding engineers, designers, and product specialists as your roadmap expands and your funding allows. There’s no hard handoff or knowledge-transfer tax when you decide to continue with the same team that built the foundation.

What is the difference between an MVP and a proof of concept?

A proof of concept (PoC) is typically an internal technical demonstration designed to answer “can we build this?” — it is rarely user-facing or production-ready. An MVP, by contrast, is a functional product delivered to real users, designed to answer “should we build this, and does it create value?” PoCs are useful for technical risk reduction; MVPs are useful for market validation. They serve different purposes and are appropriate at different stages of confidence.